AI image generation has exploded in popularity over the past year. I’ve tested numerous interfaces and Stable Diffusion WebUI (often called AUTOMATIC1111) remains the most powerful option for local generation. This browser-based interface puts professional AI image creation within reach for anyone with a capable computer.

What is Stable Diffusion WebUI?

Stable Diffusion WebUI (AUTOMATIC1111) is a free browser-based interface that lets you generate, edit, and refine AI images using Stable Diffusion models on your own computer without coding knowledge or monthly fees.

I’ve spent countless hours exploring different Stable Diffusion interfaces. After setting up WebUI on three different systems and testing competitors like ComfyUI and Fooocus, I can confirm why AUTOMATIC1111 remains the community favorite. The balance between accessibility and advanced features is unmatched.

If you’re exploring local AI image generation options, WebUI offers the most complete package. You get access to thousands of community models, extensive customization options, and a constantly evolving feature set.

This guide focuses on what beginners actually need to know. I’ll skip the technical jargon and focus on getting you generating quality images quickly.

System Requirements for Stable Diffusion WebUI

| Component | Minimum | Recommended |

|---|---|---|

| GPU | NVIDIA GTX 1060 (6GB VRAM) | NVIDIA RTX 3060 (12GB VRAM) or better |

| System RAM | 8 GB | 16 GB or more |

| Storage | 15 GB free space | 50 GB SSD (models take space) |

| Operating System | Windows 10/11, Ubuntu Linux | Windows 11 for easiest setup |

| Python | Python 3.10.6 | Python 3.10.6 (installer included) |

NVIDIA GPUs work best with Stable Diffusion WebUI. The CUDA acceleration makes a massive difference in generation speed. I’ve seen generation times drop from 45 seconds to just 8 seconds when upgrading from a GTX 1660 to an RTX 3060.

For those looking to upgrade, check out our guide on the best GPUs for Stable Diffusion. The right GPU transforms your experience from frustrating waiting to nearly instant results.

AMD GPU Users: WebUI can work with AMD graphics cards but requires additional setup steps. Performance may vary significantly compared to NVIDIA equivalents.

Mac Users: M1/M2 Macs can run Stable Diffusion through WebUI but performance is limited. Consider dedicated Windows/Linux hardware for serious generation work.

How to Install Stable Diffusion WebUI?

Installation intimidates many beginners. I remember staring at command prompts wondering if I’d break something. The process is actually straightforward once you understand the steps.

Quick Summary: Installation requires Git for downloading files, Python 3.10.6 for running the software, and cloning the WebUI repository from GitHub. The entire process takes about 15-30 minutes depending on your internet speed.

Step 1: Install Required Software

Before installing WebUI itself, you need two tools: Git and Python. These are essential for downloading and running the WebUI files.

Download Git from git-scm.com. During installation, accept the default options. Git handles downloading the WebUI files from GitHub.

Download Python 3.10.6 specifically from python.org. Version compatibility matters—newer Python versions can cause errors with WebUI. During installation, check the box that says “Add Python to PATH.”

Step 2: Clone the WebUI Repository

Open Command Prompt on Windows. Navigate to where you want to install WebUI. I recommend creating a dedicated folder like “AI” on your drive.

- Navigate to your desired location: Type

cd C:\AI(create this folder first if needed) - Clone the repository: Type

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git - Wait for download: Git will download all necessary files (several hundred MB)

- Enter the directory: Type

cd stable-diffusion-webui

Step 3: Launch WebUI

For Windows users, the process is simple. Locate the file named “webui-user.bat” in the stable-diffusion-webui folder. Double-click this file to launch WebUI.

The first launch takes longer as Python downloads additional dependencies. I’ve seen first-time setup take anywhere from 5-20 minutes depending on internet speed. Subsequent launches are much faster.

Once loaded, your browser should automatically open to http://127.0.0.1:7860. This local address means WebUI is running on your computer.

Pro Tip: Create a desktop shortcut to “webui-user.bat” for easy access. I also renamed mine to “Launch Stable Diffusion” for clarity.

For detailed platform-specific instructions, check our guide on how to install Automatic1111 WebUI on Windows. It covers edge cases and common installation errors.

Linux Installation Overview

Linux users follow a similar process but use terminal commands instead of batch files. The main differences involve handling permissions and using “webui-user.sh” instead of the .bat file.

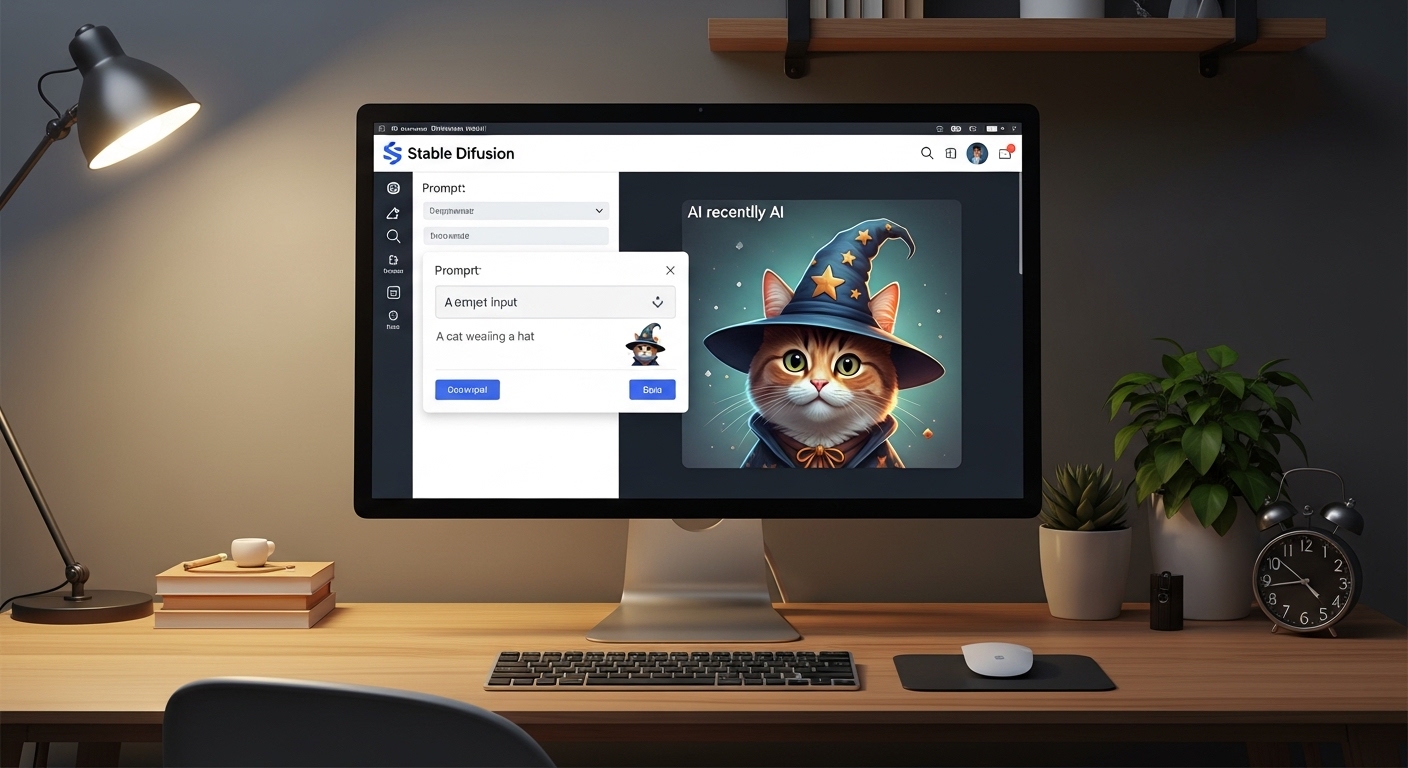

Understanding the WebUI Interface

When WebUI first loads, the interface can feel overwhelming. I spent my first few sessions clicking randomly and hoping for the best. Let me save you that confusion.

| Tab Name | Purpose | When to Use |

|---|---|---|

| txt2img | Generate images from text prompts | 90% of your work starts here |

| img2img | Transform existing images | Modifying, upscaling, or varying existing art |

| Inpaint | Edit specific image areas | Fixing faces, replacing objects, extending edges |

| Extras | Upscale and process images | Enlarging images without quality loss |

| PNG Info | View image generation data | Seeing what settings created an image |

txt2img is where you’ll spend most of your time. This tab converts text descriptions into entirely new images. It’s the core Stable Diffusion experience.

img2img takes an existing image and modifies it based on your prompt. I use this constantly when I like an image’s composition but want to change the style or add elements.

txt2img vs img2img: txt2img creates images from nothing but text. img2img requires a starting image and transforms it. img2img gives more control over composition but requires an input.

Inpainting is incredibly powerful. You can brush over an area and ask Stable Diffusion to regenerate just that portion. I’ve fixed awkward hands, changed clothing, and expanded image borders using inpaint.

Generating Your First Image with Stable Diffusion WebUI

Now for the exciting part. Let’s generate your first image.

Make sure you’re on the txt2img tab. You’ll see a large text box labeled “Prompt.” This is where you describe what you want to create.

Writing Your First Prompt

Prompt engineering is an art form itself. Start simple. For your first image, try something like:

“A serene mountain landscape at sunset, photorealistic, highly detailed, 4K”

This prompt includes the subject (mountain landscape), time (sunset), style (photorealistic), and quality indicators (highly detailed, 4K).

Below the main prompt, you’ll see a “Negative prompt” box. This tells Stable Diffusion what to avoid. A good starting negative prompt is:

“ugly, blurry, low quality, distorted, deformed”

Generating the Image

- Set your image size: Start with 512×512 for speed

- Set sampling steps: 20-30 steps is a good starting point

- Click “Generate”: The button is near the bottom right

- Wait for results: Generation time depends on your GPU

Your first generated image appears in the output area on the right. Right-click to save, or use the built-in save buttons beneath the image.

Key Takeaway: “Your first dozen images will likely be disappointing. This is normal. Stable Diffusion requires practice to understand how different prompts affect output. Stick with it—the learning curve is worth it.”

Essential Settings Explained

WebUI offers dozens of settings. Most beginners find this overwhelming. I certainly did. Let me focus on the settings that actually matter for your results.

| Setting | What It Does | Recommended Range |

|---|---|---|

| Sampling Steps | How many iterations to refine the image | 20-50 (more isn’t always better) |

| Sampler | Algorithm used for generation | DPM++ 2M Karras or Euler a |

| CFG Scale | How strictly to follow your prompt | 7-9 for most cases |

| Seed | Starting number for randomness | -1 for random, or reuse to recreate results |

| Image Size | Output dimensions in pixels | 512×512 or 512×768 for speed |

Sampling Methods Explained

The sampler choice affects both image quality and generation speed. After testing dozens of samplers across thousands of generations, I recommend two for beginners:

DPM++ 2M Karras: Excellent quality with reasonable speed. This is my default for most generations. It produces clean details without excessive artifacts.

Euler a: Very fast with good quality. Great for quick iterations when you’re experimenting with prompts.

CFG Scale: Short for “Classifier Free Guidance scale.” Lower values (3-5) give more creative freedom but may ignore your prompt. Higher values (12-15) follow instructions strictly but can look unnatural. Most images work well at 7.

Understanding Seeds

The seed determines the initial noise pattern that Stable Diffusion transforms into an image. Using the same seed with the same settings produces identical results.

I often find a generation I like but want to tweak slightly. By fixing the seed and changing only the prompt, I can make controlled adjustments. This is much more predictable than random regeneration.

Downloading and Installing Models

The default model included with WebUI produces decent results. But the real power comes from using community-created models trained on specific styles.

Key Takeaway: “Models are pre-trained AI brains. Different models excel at different styles—photography, anime, fantasy art, or specific aesthetics. Using the right model for your goal makes a huge difference.”

Where to Find Models

Civitai: The largest community model repository. Thousands of free models with preview images and user ratings. This should be your first stop.

Hugging Face: The original model hosting platform. Many official and research models live here alongside community uploads.

Installing Downloaded Models

- Download the model file: Usually .safetensors format (safer than old .ckpt files)

- Locate your models folder: stable-diffusion-webui/models/Stable-diffusion/

- Move the file: Copy your downloaded model into this folder

- Refresh WebUI: Click the refresh icon above the model dropdown

- Select your model: Choose it from the dropdown in the top left

I organize my models into subfolders by type (photography, anime, artistic). This makes it easier to find the right model for each project.

Common Issues and How to Fix Them

Even with perfect setup, things go wrong. I’ve encountered every common error over months of use. Here are the solutions I wish I’d known starting out.

Out of Memory Errors

“CUDA out of memory” is the most common error. It means your GPU doesn’t have enough video memory for your current settings.

Quick fixes:

- Reduce image resolution to 512×512

- Enable “Apply cross attention optimization” in Settings

- Lower batch size to 1

- Use –xformers launch option for significant VRAM savings

For comprehensive solutions, check our guide on how to fix low VRAM errors. It covers command-line arguments that can make WebUI runnable on cards with just 4GB of VRAM.

Slow Generation Speed

If generations take longer than 30 seconds, something needs optimization.

Speed improvements:

- Ensure you’re using your NVIDIA GPU, not CPU

- Enable xformers in your launch arguments

- Reduce sampling steps to 20-25

- Use faster samplers like Euler a for testing

Python and Installation Errors

Installation failures usually stem from Python version conflicts or missing dependencies.

Common fixes:

- Verify Python 3.10.6 specifically (not 3.11 or 3.12)

- Ensure “Add to PATH” was checked during Python installation

- Run webui-user.bat as Administrator if permissions issues occur

- Delete the “venv” folder and let WebUI recreate it

Model Not Showing Up

If a downloaded model doesn’t appear in the dropdown:

- Verify it’s in the correct folder (models/Stable-diffusion/)

- Check the file extension is .safetensors or .ckpt

- Click the refresh button next to the model dropdown

- Restart WebUI completely

This Guide is Perfect For

Complete beginners to AI image generation, users with NVIDIA GPUs, Windows users looking for straightforward installation, and anyone wanting to generate AI images without monthly subscription fees.

This Guide May Not Help

Users with integrated graphics or very old GPUs, those wanting one-click cloud-based solutions, or Mac-only users (dedicated hardware recommended for serious work).

Next Steps

Once you’ve generated your first few images, you’ll want to explore further. Consider comparing Stable Diffusion interfaces if you want to see alternatives like ComfyUI or Fooocus.

Advanced users can eventually learn to train their own LoRA models for custom styles. LoRAs let you fine-tune models on specific subjects, creating consistent characters or styles across generations.

The Stable Diffusion community moves fast. New models, techniques, and tools emerge weekly. WebUI receives regular updates adding features and improvements. The learning curve is real but so is the creative potential.

Frequently Asked Questions

Is Stable Diffusion WebUI free?

Yes, Stable Diffusion WebUI is completely free and open-source. You pay nothing for the software itself. The main costs are your computer hardware and electricity. Unlike subscription-based AI tools like Midjourney or DALL-E, once set up, you can generate unlimited images without ongoing costs.

How long does Stable Diffusion WebUI take to install?

Installation typically takes 15-30 minutes for most users. This includes installing Git and Python, cloning the WebUI repository, and downloading initial dependencies. First launch takes longer as dependencies install. Subsequent launches take just 30-60 seconds to start the interface.

Can I use Stable Diffusion WebUI without an NVIDIA GPU?

Yes, but with limitations. AMD GPUs can run WebUI but require additional configuration and may have compatibility issues. CPU-only mode is possible but extremely slow (5-10 minutes per image). Mac M1/M2 chips can run Stable Diffusion through special implementations but performance is limited. For the best experience, an NVIDIA RTX card is strongly recommended.

What is the difference between checkpoints and LoRAs?

Checkpoints are full AI models that determine the overall style and capability of your generations. LoRAs (Low-Rank Adaptation) are smaller add-on files that modify or enhance a checkpoint’s style. You can use multiple LoRAs with a single checkpoint to combine effects. Think of checkpoints as the foundation and LoRAs as style modifiers.

How do I make images higher resolution in WebUI?

Stable Diffusion was trained on 512×512 images, so larger resolutions can produce artifacts. For best results, generate at 512×512 then use the Extras tab to upscale. High-res fix in txt2img can also help by generating in two passes. Newer SDXL models natively support 1024×1024 resolution.

Why do my images look different each time with the same prompt?

Stable Diffusion uses random noise as a starting point unless you specify a seed. By default, each generation uses a different random seed, creating unique results. To recreate an image exactly, note the seed value from your generation info and reuse it. To vary slightly while maintaining similarity, use the same seed with a slightly different prompt.

What are negative prompts and when should I use them?

Negative prompts tell Stable Diffusion what to avoid in your image. Common negative prompts include quality issues like blurry, ugly, distorted, or unwanted elements. Always use negative prompts to improve image quality. They’re especially important for preventing common AI artifacts like extra limbs, strange text, or poor compositions.

How often should I update Stable Diffusion WebUI?

WebUI receives updates frequently, often multiple times per week. Major updates add new features and improvements. To update, open Command Prompt in your stable-diffusion-webui folder and run git pull. I recommend updating weekly or when you encounter a bug that might be fixed. Always backup your settings before major updates.

Leave a Reply