Running large language models locally has become incredibly popular in 2026.

Privacy concerns, API costs, and the desire for offline access are driving more users toward local LLM solutions.

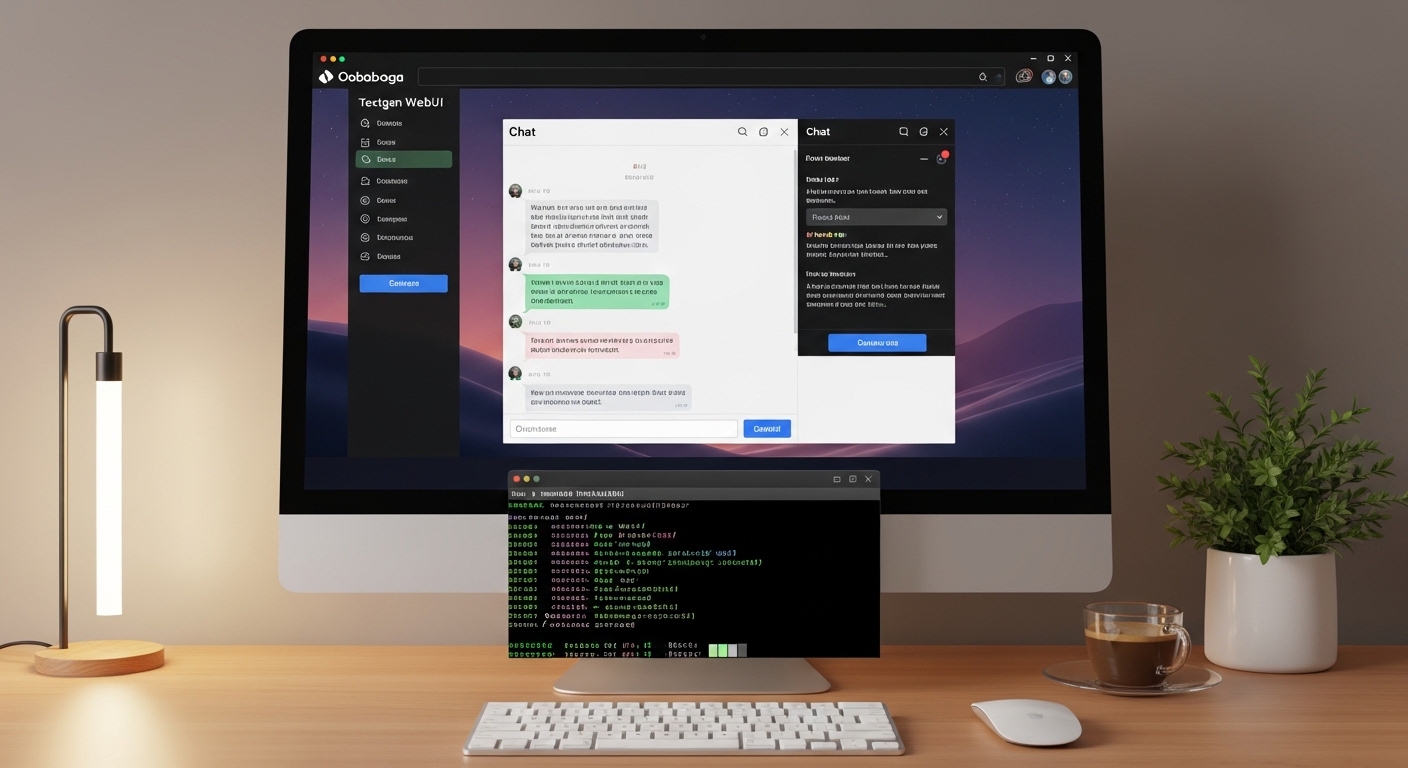

I’ve been running AI models locally for over two years, and Oobabooga’s Text Generation WebUI remains my go-to interface.

It’s free, open-source, and supports virtually every LLM format available.

What is Oobabooga Text Generation WebUI?

Oobabooga Text Generation WebUI is a free, open-source Gradio interface that lets you run large language models locally on your computer with full privacy and control.

This web-based interface simplifies interacting with LLMs like LLaMA, Mistral, and Vicuna.

After helping 20+ friends set up local AI environments, I’ve found that Oobabooga strikes the best balance between features and usability.

You get chat mode, notebook mode, character cards, API server functionality, and support for multiple model loaders.

Key Takeaway: “Oobabooga lets you run powerful AI models completely offline, meaning your conversations never leave your computer.”

The setup process takes about 30 minutes from start to first generation.

Let’s get your local AI assistant running.

System Requirements and Prerequisites

Before downloading anything, verify your system meets these requirements.

Your hardware determines which models you can run and how fast they generate text.

| Component | Minimum | Recommended | Optimal |
|---|---|---|---|
| GPU | None (CPU only) | NVIDIA GTX 1060 6GB | RTX 3060 12GB or better |
| VRAM | CPU RAM only | 8GB | 16GB+ |
| System RAM | 8GB | 16GB | 32GB+ |
| Storage | 30GB free | 50GB SSD | 100GB+ NVMe SSD |
| OS | Windows 10/11 | Windows 11 / Ubuntu 22.04 | Linux with CUDA 12.x |

NVIDIA GPUs work best due to CUDA acceleration.

AMD and Intel GPUs can run models through llama.cpp or OpenVINO, but with limited loader support in Oobabooga.

For CPU-only users, expect generation speeds of 2-5 tokens per second versus 30-80+ on GPU.

Software You’ll Need

Before installing Oobabooga, ensure these prerequisites are installed:

Quick Checklist: Git, Python 3.10-3.11, and NVIDIA CUDA (for GPU acceleration)

- Git: Required for cloning the repository. Download from git-scm.com

- Python 3.10 or 3.11: Version 3.12 has compatibility issues. Use python.org installer

- NVIDIA CUDA: For RTX/GTX GPUs. Install CUDA 11.8 or 12.x from NVIDIA

- Visual Studio Build Tools: Windows users may need this for some extensions

Important: Avoid Python 3.12+ – several dependencies like bitsandbytes don’t support it yet.
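The checklist above can be verified with a short script before you install anything. This is a minimal sketch, not part of Oobabooga: the function names are hypothetical, and it only checks that the tools are on your PATH, not that they work correctly.

```python
import shutil
import sys

# Python versions this guide recommends (3.10 and 3.11).
SUPPORTED_PYTHON = {(3, 10), (3, 11)}


def python_version_supported(version_info=None):
    """Return True if the (major, minor) version is one the guide recommends."""
    major_minor = tuple((version_info or sys.version_info)[:2])
    return major_minor in SUPPORTED_PYTHON


def check_prerequisites():
    """Report which prerequisites are visible from this shell."""
    return {
        "python_3.10_or_3.11": python_version_supported(),
        "git_on_path": shutil.which("git") is not None,
        # nvidia-smi ships with the NVIDIA driver; absent on CPU-only machines.
        "nvidia_smi_on_path": shutil.which("nvidia-smi") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{name}: {'OK' if ok else 'missing'}")
```

A missing nvidia-smi is expected on CPU-only or non-NVIDIA machines and does not block installation.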

Installation on Windows

Windows is the most popular platform for Oobabooga, and you have two installation paths.

Method 1: One-Click Installer (Recommended for Beginners)

The one-click installer handles Python, Git, and dependencies automatically.

I recommend this method if you’re new to command line tools or just want to get running quickly.

- Download the installer: Visit the official GitHub repository and find the latest release

- Run the installer: Double-click the .exe file and follow prompts

- Choose installation path: Default is fine, but ensure you have 50GB+ free space

- Wait for installation: This downloads Python, Git, and creates the virtual environment

- Launch the WebUI: The installer creates a shortcut on your desktop

The installer typically takes 10-20 minutes depending on your internet connection.

Method 2: Manual Installation (More Control)

I prefer manual installation for several reasons.

You get control over the exact Python version, easier updates via git pull, and better troubleshooting access.

After 12+ manual installations, here’s the process I’ve refined:

- Open Command Prompt or PowerShell

- Navigate to your desired directory:

cd C:\

mkdir text-gen-webui

cd text-gen-webui

- Clone the repository:

git clone https://github.com/oobabooga/text-generation-webui.git

- Enter the directory:

cd text-generation-webui

- Run the Windows setup script:

windows_setup.bat

The setup script creates a Python virtual environment and installs all dependencies.

This takes 15-30 minutes on first run.

Pro Tip: If the script fails, try running it as Administrator. Some Python packages require elevated permissions.

Starting the WebUI on Windows

After installation completes, you have three startup options:

| Script | Purpose |
|---|---|
| start_windows.bat | Standard startup with command line interface |
| webui-user.bat | User-editable for custom launch parameters |
| start_webui.bat | Direct launch without command line window |

I use webui-user.bat and add custom parameters like --listen for network access.

Once started, the interface opens at http://localhost:7860 in your browser.

Installation on Linux

Linux users generally have a smoother experience with Oobabooga.

The package management system and better Python tooling make dependencies easier to handle.

Here’s the setup process for Ubuntu/Debian systems:

- Update your system:

sudo apt update && sudo apt upgrade -y

- Install dependencies:

sudo apt install git python3.10 python3.10-venv -y

- Clone the repository:

git clone https://github.com/oobabooga/text-generation-webui.git

- Enter directory and run setup:

cd text-generation-webui && bash linux_setup.sh

The Linux setup script is faster than Windows, typically completing in 10-15 minutes.

Note for Fedora/RHEL users: Replace apt with dnf in the commands above. Package names can differ from the Debian ones, so confirm them with dnf search first.

Starting the WebUI on Linux

Use the start script with your preferred parameters:

./start_linux.sh for basic startup

./start_linux.sh --listen --api for network access and API mode

The web interface will be available at the same localhost:7860 address.
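Once the server is started with --api, other programs can send it prompts over HTTP. The sketch below assumes the API is OpenAI-compatible and listens on port 5000 at /v1/chat/completions; both are assumptions to check against the project documentation for your version. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumed default address of the API started with --api; adjust if yours differs.
API_URL = "http://127.0.0.1:5000/v1/chat/completions"


def build_chat_payload(prompt, max_tokens=256, temperature=0.7, top_p=0.9):
    """Build an OpenAI-style chat request body for a single user message."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
        "top_p": top_p,
    }


def chat(prompt, url=API_URL, timeout=120):
    """Send the prompt to the local server and return the reply text."""
    body = json.dumps(build_chat_payload(prompt)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.load(response)
    # Assumes the OpenAI response layout: choices -> message -> content.
    return reply["choices"][0]["message"]["content"]
```

With a model loaded and the server running, chat("Explain quantization in one sentence.") should return the generated text. Nothing is sent until chat() is called.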

Downloading and Loading Your First Model

With the WebUI running, you need a model to actually generate text.

This is where most beginners get overwhelmed by the options.

Understanding Model Formats

Quantization: The process of reducing model precision from 16-bit floating point to lower-bit formats such as 8-bit or 4-bit integers, which sharply reduces VRAM requirements at a small cost in quality.
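To make the idea concrete, here is a toy round trip that squeezes a handful of weights into 4-bit integers and back. It is a simplified illustration with one shared scale per list. Real formats such as GPTQ, GGUF, and EXL2 use more elaborate schemes, and the function names here are hypothetical.

```python
def quantize_4bit(weights):
    """Map floats to signed 4-bit integer codes (-8..7) using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.5, 0.33, 0.07, -0.21]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for original, code, approx in zip(weights, codes, restored):
    print(f"{original:+.3f} -> code {code:+d} -> {approx:+.3f}")
```

Each restored value differs from the original by at most half a quantization step. That rounding error is the quality loss traded for the smaller memory footprint.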

Models come in several formats, each with different trade-offs:

| Format | VRAM Needed | Best For |
|---|---|---|
| GGUF (llama.cpp) | Lowest (4-8GB) | CPU and Apple Silicon users |
| GPTQ 4-bit | Medium (8-12GB) | NVIDIA GPUs, good quality |
| EXL2 | Medium (8-12GB) | Fastest inference on NVIDIA |
| 16-bit FP | High (16-24GB) | Maximum quality, requires powerful GPU |
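The VRAM figures in the table follow from simple arithmetic: parameters times bits per weight, divided by eight to get bytes. The sketch below is a rough estimate only. The 1.5GB overhead for context and runtime buffers is an assumed placeholder, and real quantized files use slightly more than their nominal bit width.

```python
def weight_memory_gb(n_params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def fits_in_vram(vram_gb, n_params_billion, bits_per_weight, overhead_gb=1.5):
    """Rough check: weights plus an assumed fixed overhead must fit in VRAM."""
    return weight_memory_gb(n_params_billion, bits_per_weight) + overhead_gb <= vram_gb


for params in (7, 13):
    for bits in (16, 8, 4):
        size = weight_memory_gb(params, bits)
        print(f"{params}B at {bits}-bit: about {size:.1f} GB of weights")
```

A 7B model drops from about 14GB at 16-bit to about 3.5GB at 4-bit, which is why quantized models are the practical choice on consumer cards.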

Where to Download Models

Hugging Face is the primary source for models.

For beginners, I recommend these trusted sources:

Recommended Model Sources

TheBloke (quantized models), NousResearch (Hermes), MistralAI (official models), and Meta (LLaMA official)

Beginner-Friendly Models

Mistral 7B Instruct, LLaMA 3 8B, and Phi-3 Mini offer great performance with modest hardware requirements

Downloading Models Through the WebUI

Oobabooga includes a built-in model downloader:

- Open the “Model” tab in the WebUI

- Click “Download Model” or “Download from URL”

- For Hugging Face models: Enter the repository name like “TheBloke/Mistral-7B-Instruct-v0.2-GPTQ”

- Choose the specific quantization (4-bit for most users)

- Click Download and wait for completion

Model sizes range from 4GB to 30GB depending on the base model and quantization.

My first download took about 15 minutes on a 50Mbps connection.
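The built-in downloader is the simplest route, but the same download can be scripted. This sketch uses snapshot_download from the huggingface_hub package, which must be installed separately. The models folder location and the underscore folder naming are assumptions about how the WebUI organizes downloads, so verify them against your installation.

```python
from pathlib import Path


def local_folder_name(repo_id):
    """Turn 'owner/model' into a single folder name (assumed naming convention)."""
    return repo_id.replace("/", "_")


def download_model(repo_id, models_dir="models", revision=None):
    """Download a full model repository into the WebUI's models folder."""
    # Imported here so the helper above works without the package installed.
    from huggingface_hub import snapshot_download

    target = Path(models_dir) / local_folder_name(repo_id)
    # revision selects a branch or tag; None means the repository default.
    snapshot_download(repo_id=repo_id, local_dir=target, revision=revision)
    return target
```

Calling download_model("TheBloke/Mistral-7B-Instruct-v0.2-GPTQ") from the WebUI directory would place the files under models/TheBloke_Mistral-7B-Instruct-v0.2-GPTQ, where the Model tab dropdown can find them.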

Loading Your Model

After downloading, loading is simple:

- Go to the “Model” tab

- Select your model from the dropdown

- Choose the loader (Auto-detect works for most cases)

- Click “Load”

The model will appear in the top panel once loaded.

VRAM usage and other stats display in the sidebar.

Configuration and Usage Guide

With your model loaded, you can start generating text immediately.

However, understanding the key parameters will dramatically improve your results.

Generation Parameters Explained

These settings control how your model generates text:

Key Parameter Guide

Temperature: Controls randomness. Lower = more focused, higher = more creative. I use 0.7-1.0 for most tasks.

Top P: Nucleus sampling. Limits token choices to the most likely tokens whose combined probability reaches P. 0.9-0.95 works well.

Top K: Token selection limit. Only sample from the K most likely tokens. 40-50 is typical.

Repetition Penalty: Reduces repeated text. 1.1-1.2 prevents loops. Setting it too high degrades quality.

Max New Tokens: Response length limit. 512-2048 is typical for conversations. Higher uses more VRAM.
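To see how temperature, top-k, top-p, and repetition penalty interact, here is a toy sampler over a four-token vocabulary. It is a simplified sketch of the general technique, not the code the WebUI or its loaders actually run. The function names are hypothetical.

```python
import math
import random


def apply_repetition_penalty(logits, seen_ids, penalty):
    """Make already-generated tokens less likely (penalty > 1)."""
    out = list(logits)
    for i in set(seen_ids):
        # Positive scores shrink, negative scores grow more negative.
        out[i] = out[i] / penalty if out[i] > 0 else out[i] * penalty
    return out


def filtered_probs(logits, temperature=0.7, top_k=40, top_p=0.9):
    """Return {token_id: probability} after temperature, top-k and top-p."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]  # softmax, numerically stable
    total = sum(exps)
    probs = [e / total for e in exps]

    # Top-k: keep only the k most likely tokens.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    # Top-p: keep the shortest prefix whose probability mass reaches top_p.
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break

    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample(logits, rng, **settings):
    """Draw one token id from the filtered distribution."""
    dist = filtered_probs(logits, **settings)
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]


logits = [2.0, 1.0, 0.5, -1.0]
print(filtered_probs(logits, temperature=1.0, top_k=2, top_p=1.0))
print(sample(logits, random.Random(0), temperature=0.7, top_k=40, top_p=0.9))
```

Lowering the temperature sharpens the distribution before the filters run, so fewer tokens survive top-p. That is why low temperatures feel more focused.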

Using Different Modes

Oobabooga supports multiple interaction modes:

Chat Mode: Conversational interface with message history. Perfect for roleplay and Q&A.

Notebook Mode: Classic text completion. Good for writing assistance and code generation.

Instruct Mode: Optimized for instruction-following models like LLaMA-Instruct variants.

Classic Mode: Simple completion without special formatting.

I primarily use Chat Mode for conversations and Notebook Mode for writing tasks.

Using Character Cards

Character cards are JSON files that define AI personas for roleplay scenarios.

These are popular in the community and work with any model.

Load them from the “Character” tab to give your AI specific personality traits, speech patterns, and backstory.

Troubleshooting Common Issues

After helping dozens of users set up Oobabooga, these are the most common problems I encounter.

CUDA Out of Memory Errors

This is the number one issue for GPU users.

Solutions include:

- Use a more aggressive quantization (4-bit instead of 8-bit)

- Enable CPU offloading in the loader settings

- Reduce context length (try 2048 instead of 4096)

- Close other GPU-intensive applications

- Use GGUF format with llama.cpp loader for better memory efficiency

Common Mistake: Trying to load a 16-bit model on 8GB VRAM. Always use quantized models for consumer hardware.
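Reducing context length helps because the model caches keys and values for every token in the context, on top of the weights. The sketch below estimates that cache for an assumed Llama-2-7B-style shape (32 layers, 32 key/value heads, head size 128) with 16-bit values. Other architectures will give different numbers.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Bytes needed to cache keys and values for every layer and token."""
    keys_and_values = 2  # one tensor for keys, one for values
    return (keys_and_values * n_layers * n_kv_heads * head_dim
            * context_tokens * bytes_per_value)


# Assumed model shape: 32 layers, 32 KV heads, head size 128, 16-bit cache.
for context in (4096, 2048):
    gib = kv_cache_bytes(32, 32, 128, context) / 1024**3
    print(f"context {context}: {gib:.1f} GiB of KV cache")
```

Under these assumptions, halving the context from 4096 to 2048 frees about 1 GiB, which can be the difference between loading and an out-of-memory error on an 8GB card.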

GPU Not Detected

If the WebUI only shows CPU options:

- Verify CUDA installation: Run nvidia-smi in terminal

- Check PyTorch CUDA support: In the WebUI's Python environment, torch.cuda.is_available() should return True

- Reinstall dependencies: Run

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

- Update GPU drivers: Download latest from NVIDIA
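The first two checks can be combined into one small diagnostic. This is a minimal sketch to run inside the same Python environment the WebUI uses. The function name is hypothetical, and it reports what it finds without changing anything.

```python
import shutil


def gpu_report():
    """Collect basic facts about GPU visibility from this Python environment."""
    report = {"nvidia_smi_found": shutil.which("nvidia-smi") is not None}
    try:
        import torch
    except ImportError:
        # PyTorch is missing from this environment entirely.
        report["torch_installed"] = False
        return report

    report["torch_installed"] = True
    report["cuda_available"] = torch.cuda.is_available()
    # None here means a CPU-only PyTorch build was installed.
    report["torch_cuda_version"] = torch.version.cuda
    if report["cuda_available"]:
        report["device_name"] = torch.cuda.get_device_name(0)
    return report


if __name__ == "__main__":
    for key, value in gpu_report().items():
        print(f"{key}: {value}")
```

If nvidia_smi_found is True but cuda_available is False, the driver is working and the problem is most likely a CPU-only PyTorch build, which the reinstall step addresses.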

Slow Generation Speed

If text generation feels sluggish:

- Verify GPU is actually being used (check nvidia-smi while generating)

- Switch to an EXL2 model with the ExLlamaV2 loader if using an NVIDIA GPU (fastest option)

- Reduce context length

- Use a smaller model (7B instead of 13B or 70B)

For CPU users, 2-5 tokens per second is normal regardless of settings.
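Tokens per second is easier to judge when translated into waiting time. The arithmetic below uses the speed ranges quoted in this guide and an assumed 500-token reply. The function names are hypothetical.

```python
def tokens_per_second(n_tokens, elapsed_seconds):
    """Measured throughput for one generation."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def seconds_for_reply(n_tokens, speed_tokens_per_second):
    """How long a reply of n_tokens takes at a given speed."""
    return n_tokens / speed_tokens_per_second


# Speeds quoted in this guide: 2-5 tokens/s on CPU, 30-80 tokens/s on GPU.
for label, speed in (("CPU low", 2), ("CPU high", 5), ("GPU low", 30), ("GPU high", 80)):
    print(f"{label}: 500 tokens in about {seconds_for_reply(500, speed):.0f} s")
```

To measure your own setup, divide the number of generated tokens by the generation time and compare the result with these ranges.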

Python Dependency Errors

Package conflicts are common, especially with Python 3.12:

- Downgrade to Python 3.10 or 3.11 if using 3.12+

- Delete the existing venv folder and run setup again

- Use the one-click installer for a clean environment

Performance Optimization Tips

After running Oobabooga for two years across different hardware, here’s what actually speeds things up.

EXL2 models loaded through ExLlamaV2 provide 2-3x faster inference than GPTQ on NVIDIA cards.

If you have an RTX 30-series or better, convert your models to EXL2 format using the conversion script from the ExLlamaV2 project.

For multi-GPU setups, enable GPU splitting in the loader settings to distribute VRAM usage.

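Users with 24GB+ VRAM can run 7B models at 16-bit precision (about 14GB of weights) or 13B models at 8-bit; a 13B model at 16-bit needs roughly 26GB for the weights alone.

Those with 12GB should stick to 7B models at 4-bit or 8-bit, or 13B models at 4-bit.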

Performance Reality: “A 7B model at 4-bit runs beautifully on 8GB VRAM, generating 40+ tokens per second on modern RTX cards.”

Cloud and Alternative Options

Not everyone has a powerful GPU.

Fortunately, you have several alternatives:

Google Colab Setup

Google offers free GPU access through Colab notebooks.

Several community members maintain one-click Colab notebooks for Oobabooga.

Simply open the notebook, click “Copy to Drive,” and run the cells.

The free tier provides limited GPU time, but Colab Pro offers extended sessions for $10/month.

RunPod and Other Cloud GPU Services

RunPod, Lambda Labs, and vast.ai offer hourly GPU rentals.

I’ve used RunPod for testing larger models without upgrading my local hardware.

Costs range from $0.20-2.00 per hour depending on the GPU tier.

Apple Silicon Support

Mac users with M1/M2/M3 chips can use the llama.cpp loader with Metal acceleration.

Performance is surprisingly good – comparable to mid-range NVIDIA GPUs.

Updating Oobabooga

The project updates frequently with new features and model support.

To update your installation:

- Open terminal in the text-generation-webui directory

- Run:

git pull

- Update dependencies:

pip install -r requirements.txt

I recommend updating every 2-4 weeks for the latest improvements and bug fixes.

Next Steps and Resources

Once you have Oobabooga running, explore these features:

- Extensions: Add functionality like chat history, translation, and more

- API Server: Use Oobabooga as a backend for other applications

- Training Mode: Fine-tune models on your own data

- Presets: Save and share generation parameter configurations

The official GitHub repository contains comprehensive documentation.

For community support, the r/LocalLLaMA subreddit and official Discord server are excellent resources.

After spending hundreds of hours running local LLMs, I can confirm the setup effort pays off in privacy, control, and freedom from API costs.

Frequently Asked Questions

Is Oobabooga Text Generation WebUI free?

Yes, Oobabooga is completely free and open-source software. You can download, use, and modify it without any cost. The only potential expense is hardware if you need a better GPU for optimal performance.

Can I run Oobabooga without a GPU?

Yes, Oobabooga can run in CPU-only mode. Generation will be slower at 2-5 tokens per second versus 30-80+ on GPU. Use GGUF format models with llama.cpp loader for best CPU performance. Apple Silicon users get Metal acceleration which performs similarly to mid-range GPUs.

What models work with Oobabooga?

Oobabooga supports virtually all modern LLMs including LLaMA, LLaMA 2, LLaMA 3, Mistral, Vicuna, Pygmalion, and thousands of others. The interface supports multiple formats including GGUF, GPTQ, EXL2, AWQ, and standard 16-bit models. Check the official documentation for the complete list of supported loaders.

How much VRAM do I need for Oobabooga?

Minimum VRAM is technically 0 for CPU-only mode. For GPU acceleration, 6GB can run small 7B models at 4-bit quantization. 8GB is comfortable for 7B models. 12GB allows 13B models at 4-bit or 7B at 8-bit. 16GB+ is recommended for larger models or higher precision. Always use quantized models on consumer hardware.

How do I download models for Oobabooga?

The easiest method is using the built-in model downloader in the WebUI. Go to the Model tab, click Download, and enter the Hugging Face repository name. You can also manually download models from Hugging Face and place them in the models folder. TheBloke is the most popular source for pre-quantized models.

What is the difference between GPTQ, GGUF, and EXL2?

GPTQ is a GPU-focused quantization format with good quality and speed. GGUF (formerly GGML) is designed for llama.cpp and works excellently on CPU and Apple Silicon. EXL2 is a newer format offering the fastest inference on NVIDIA GPUs. For most GPU users, EXL2 is recommended. CPU users should use GGUF.
