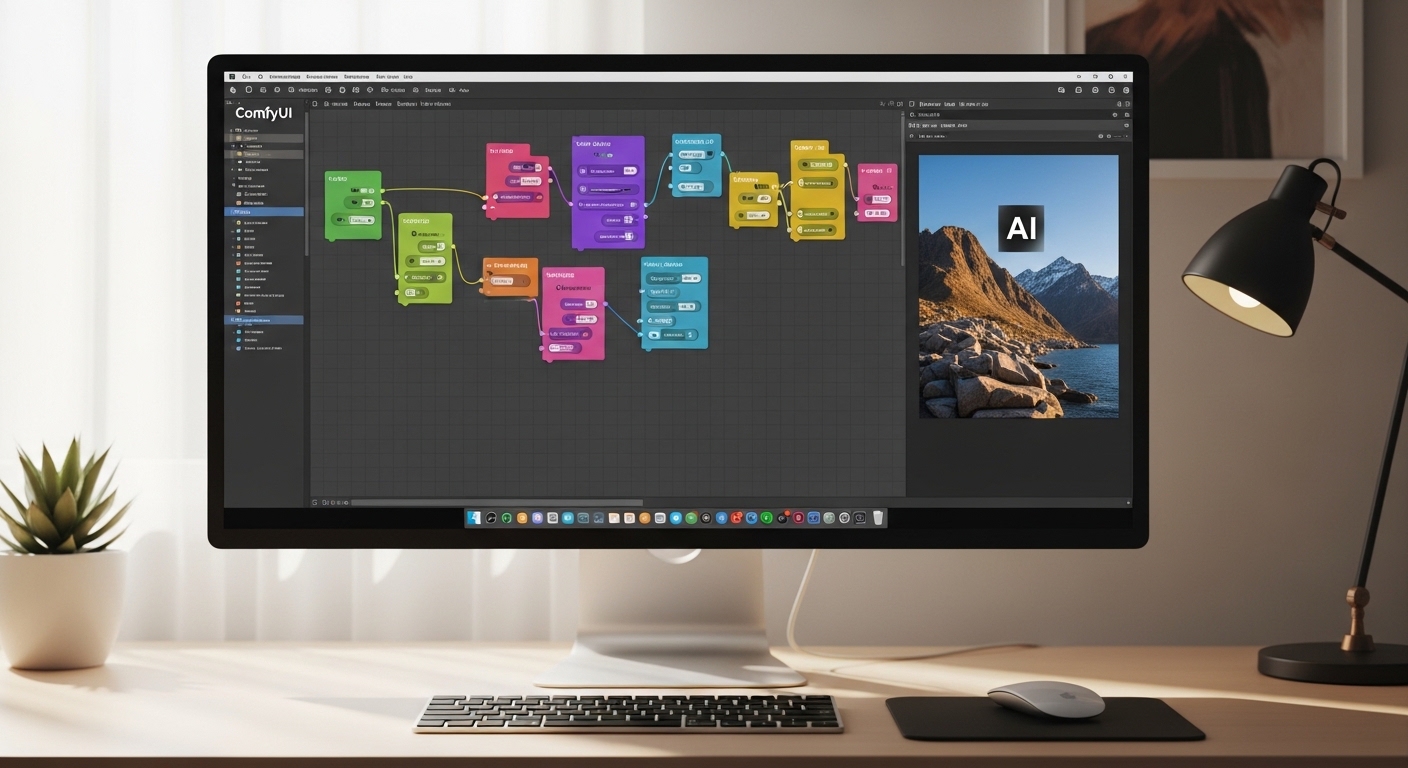

ComfyUI has become the go-to interface for serious AI image creators in 2026. The node-based workflow system offers control that web interfaces simply cannot match. After spending six months building ComfyUI SDXL workflows, I have learned what works and what wastes time.

ComfyUI is a node-based graphical interface for Stable Diffusion that gives you granular control over AI image generation through visual workflow building. Instead of clicking buttons in a fixed interface, you connect nodes like building blocks to create custom pipelines for text-to-image, image-to-image, and advanced generation workflows.

ComfyUI uses a node-based system where you connect components like KSampler, VAE, and CLIP to process text prompts and generate images through Stable Diffusion models. Each node performs a specific function, and the connections between them determine how data flows through your workflow.

Why does this matter? I have found that ComfyUI workflows let me automate repetitive tasks, batch process hundreds of images, and create complex effects that would take hours in other interfaces. When I needed to generate 500 product variations for a client last month, ComfyUI completed the job in under 20 minutes.

This guide covers the essential ComfyUI SDXL workflows every beginner needs. You will learn installation, basic text-to-image generation, image-to-image workflows, LoRA usage, and common troubleshooting. By the end, you will have working workflows you can use immediately.

| Feature | ComfyUI | Automatic1111 |
|---|---|---|
| Interface Type | Node-based visual workflow | Tabbed web interface |
| Workflow Automation | Excellent – save and reuse workflows | Limited – manual repetition |
| Batch Processing | Built-in and powerful | Available but clunky |
| Learning Curve | Steeper initially | Easier for beginners |
| Custom Nodes | Massive ecosystem | Extensions available |

Key Takeaway: “ComfyUI requires more upfront learning than Automatic1111, but pays off with workflow automation and batch processing capabilities that save hours on repetitive tasks.”

How to Install ComfyUI for SDXL?

Quick Summary: Installing ComfyUI takes about 10-15 minutes. You need Python installed, a GPU with at least 8GB VRAM for SDXL, and about 20GB of free disk space.

Installing ComfyUI is straightforward if you have the right hardware. I have installed it on three different systems, and the process is consistent. Here is what you need before starting.

Hardware Requirements for SDXL

SDXL demands more VRAM than SD 1.5 models. After testing on different GPUs, here are my findings:

| GPU Tier | VRAM Required | Example GPUs |
|---|---|---|
| Minimum | 8GB VRAM | RTX 3060, RX 6600 XT |
| Recommended | 12GB+ VRAM | RTX 4070, RX 7800 XT |
| Optimal | 16GB+ VRAM | RTX 4080, RX 7900 XT |

Note: You can run ComfyUI without a GPU using CPU mode, but SDXL generation will take 5-10 minutes per image. For practical use, a GPU is essential.
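As a quick sanity check before installing, the tiers in the table above can be expressed as a small lookup. This is only a sketch: the function name `sdxl_vram_tier` is made up for illustration, and the thresholds are simply the ones from the table, not hard limits enforced by ComfyUI.

```python
def sdxl_vram_tier(vram_gb: float) -> str:
    """Map a VRAM amount in GB to the tiers from the table above."""
    if vram_gb >= 16:
        return "optimal"
    if vram_gb >= 12:
        return "recommended"
    if vram_gb >= 8:
        return "minimum"
    return "below minimum"


# Print the tier for a few common card sizes.
for gb in (6, 8, 12, 24):
    print(f"{gb} GB -> {sdxl_vram_tier(gb)}")
```

If PyTorch is already installed on a machine with an NVIDIA GPU, `torch.cuda.get_device_properties(0).total_memory` reports the card's memory in bytes, which you can divide by 1024**3 and pass to this function.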

Installation Steps

- Install Python 3.10 or 3.11: Download from python.org. During installation, check “Add Python to PATH.”

- Install Git: Download from git-scm.com if not already installed.

- Clone ComfyUI repository: Open Command Prompt and run: `git clone https://github.com/comfyanonymous/ComfyUI`

- Navigate to ComfyUI folder: `cd ComfyUI`

- Create Python virtual environment: `python -m venv venv`

- Activate virtual environment: Windows: `venv\Scripts\activate` | Mac/Linux: `source venv/bin/activate`

- Install requirements: `pip install -r requirements.txt`

- Download ComfyUI Manager: Clone into ComfyUI/custom_nodes/ for easy node management.

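Once installation completes, start ComfyUI from the ComfyUI folder with the virtual environment active by running python main.py (add --cpu to run without a GPU). The run_cpu.bat and run_nvidia_gpu.bat launchers ship only with the Windows portable build, not with a git clone. The interface opens at http://localhost:8188 in your browser.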

Downloading SDXL Models

ComfyUI needs SDXL checkpoint models to generate images. I recommend starting with these free options from Hugging Face or Civitai:

- SDXL Base 1.0: Official Stability AI model, great all-rounder

- Juggernaut XL: Excellent for photorealistic images

- DreamShaper XL: Fantastic for artistic and illustration styles

Place downloaded .safetensors files in: ComfyUI/models/checkpoints/
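A common first stumbling block is a checkpoint saved to the wrong folder. The sketch below lists the .safetensors files ComfyUI should be able to see. The helper name `list_checkpoints` and the assumption that ComfyUI lives in a folder called ComfyUI next to the script are illustrative; adjust the path to your install.

```python
from pathlib import Path


def list_checkpoints(comfy_root: str) -> list[str]:
    """Return sorted .safetensors file names found in models/checkpoints."""
    ckpt_dir = Path(comfy_root) / "models" / "checkpoints"
    if not ckpt_dir.is_dir():
        return []
    return sorted(p.name for p in ckpt_dir.glob("*.safetensors"))


# "ComfyUI" is an assumed install location; an empty list means either
# the folder does not exist or no checkpoint has been placed there yet.
found = list_checkpoints("ComfyUI")
print(found if found else "No checkpoints found in ComfyUI/models/checkpoints")
```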

Understanding the ComfyUI Interface

Node: A node is a single function block in ComfyUI. Each node takes inputs, processes them, and produces outputs. For example, a KSampler node takes a model, encoded prompts, and a latent image, then outputs a denoised latent that a VAE Decode node turns into a viewable image.

The ComfyUI interface looks intimidating at first glance. When I first opened it, I saw a blank gray canvas with tiny dots everywhere. Those dots are connection points. Understanding the interface took me about two hours of experimentation.

The workspace consists of several key areas:

- Node Menu (Right-click anywhere): Shows all available nodes you can add

- Canvas (main area): Where you arrange and connect nodes

- Queue (sidebar): Shows pending and completed generations

- Widgets (node controls): Sliders, text boxes, and dropdowns on each node

Connecting nodes is simple: click and drag from an output dot on one node to an input dot on another. The connection line shows data flowing between nodes. ComfyUI prevents invalid connections, so do not worry about breaking anything.

Pro Tip: Right-click the canvas and choose “Add Node” to see all available nodes organized by category. Search by name to find specific nodes quickly.

Your First SDXL Text-to-Image Workflow

Quick Summary: A basic SDXL text-to-image workflow uses six node types (seven nodes in total, because CLIP Text Encode appears twice): CheckpointLoader, CLIP Text Encode, Empty Latent Image, KSampler, VAE Decode, and Save Image. Connect them in order to generate images from text prompts.

Creating your first ComfyUI SDXL workflow is a milestone moment. I still remember generating my first image after struggling with node connections for 30 minutes. The key is understanding the data flow: the checkpoint loads, the prompts are encoded, an empty latent is created, the sampler denoises it, the VAE decodes it, and the image is saved.

Step 1: Load the SDXL Checkpoint

Right-click the canvas, navigate to “loaders” and select “CheckpointLoaderSimple” (shown as “Load Checkpoint” in the menu). This node loads your SDXL model. Click the “ckpt_name” dropdown and select your downloaded SDXL checkpoint; the list is populated from the models/checkpoints folder.

The CheckpointLoader has three outputs: MODEL, CLIP, and VAE. The MODEL output carries the actual diffusion model for generation. The CLIP output handles text encoding for prompts. The VAE output is used later to decode the result into a visible image.

Step 2: Add Text Encoding Nodes

The KSampler expects two separate conditioning inputs: a positive prompt (what you want) and a negative prompt (what to avoid). Add two “CLIP Text Encode” nodes from the “conditioning” category.

Connect the CLIP output from CheckpointLoader to the “clip” input on both text encode nodes. In the first text encode node, enter your positive prompt like “a serene mountain landscape at sunset, photorealistic, detailed.” In the second, enter a negative prompt like “blurry, low quality, distorted.”

Step 3: Create the Empty Latent Image

Add an “Empty Latent Image” node from the “latent” category. This node defines your image dimensions. For SDXL, I recommend:

- Width: 1024 or 1216

- Height: 1024 or 832

- Batch size: 1 (increase for multiple images at once)

SDXL native resolution is 1024×1024, but aspect ratios like 1216×832 (landscape) or 832×1216 (portrait) work great. The output of this node is “LATENT,” which connects to KSampler.
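To see why these sizes are interchangeable, it helps to look at the latent the node actually creates. SDXL works on a 4-channel latent at one eighth of the pixel resolution, so dimensions should be multiples of 8, and the suggested aspect ratios keep the pixel count close to 1024×1024. A minimal sketch, with function names chosen for illustration:

```python
def latent_shape(width: int, height: int, batch_size: int = 1) -> tuple:
    """Shape of the empty latent tensor for a given pixel size."""
    if width % 8 or height % 8:
        raise ValueError("width and height should be multiples of 8")
    # (batch, channels, latent height, latent width)
    return (batch_size, 4, height // 8, width // 8)


def pixel_budget_ratio(width: int, height: int) -> float:
    """Pixel count relative to SDXL's native 1024x1024."""
    return (width * height) / (1024 * 1024)


for w, h in ((1024, 1024), (1216, 832), (832, 1216)):
    print(w, h, latent_shape(w, h), round(pixel_budget_ratio(w, h), 2))
```

Both 1216×832 and 832×1216 come out at roughly 96% of the native pixel count, which is why they behave like native-resolution generations rather than upscales.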

Step 4: Configure KSampler

Add a “KSampler” node from the “sampling” category. This is the heart of your workflow where image generation happens. Connect:

- model: Connect from CheckpointLoader MODEL output

- positive: Connect from positive CLIP Text Encode output

- negative: Connect from negative CLIP Text Encode output

- latent_image: Connect from Empty Latent Image output

For SDXL, I use these KSampler settings after extensive testing:

| Setting | Recommended SDXL Value | What It Does |
|---|---|---|
| Sampler (sampler_name) | dpmpp_2m (DPM++ 2M) | Fast, high-quality sampler |
| Scheduler | karras | Noise schedule for denoising |
| Steps | 20-30 | More steps = more detail (diminishing returns after 30) |
| CFG | 5-8 | How strongly to follow prompt (7 is standard) |
| Seed | Any fixed number; set “control after generate” to randomize for a new seed each run | Same seed and settings reproduce the same image |
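When a workflow is driven from a script instead of the canvas, these settings become plain key/value inputs on the KSampler node. Note that what other tools call “DPM++ 2M Karras” is split in ComfyUI into sampler_name dpmpp_2m and scheduler karras, and that a script has to supply a concrete seed itself. The helper below is a hypothetical sketch of that idea; the step bounds are arbitrary guard rails, not ComfyUI limits.

```python
import random


def ksampler_settings(seed=None, steps=25, cfg=7.0):
    """Build KSampler inputs using the values recommended above."""
    if not 1 <= steps <= 100:
        raise ValueError("steps outside the illustrative 1-100 range")
    if seed is None:
        # No seed given: pick one ourselves so every run differs.
        seed = random.randrange(2**32)
    return {
        "seed": seed,
        "steps": steps,
        "cfg": cfg,
        "sampler_name": "dpmpp_2m",
        "scheduler": "karras",
        "denoise": 1.0,  # full denoise for text-to-image
    }


print(ksampler_settings(seed=42))
```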

Step 5: Decode and Save

Add a “VAE Decode” node from the “latent” category. Connect the “LATENT” output from KSampler to the “samples” input on VAE Decode. Also connect the “VAE” output from CheckpointLoader to the “vae” input.

Finally, add a “Save Image” node from the “image” category. Connect the “IMAGE” output from VAE Decode to the “images” input on Save Image.

Generate Your First Image

Press “Queue Prompt” (labelled “Queue” or “Run” in newer versions of the interface). ComfyUI will process your workflow and generate an image. The image appears as a preview on the Save Image node and saves to your ComfyUI/output folder.
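The same seven-node graph can also be written out in ComfyUI's API format, where every node is a dictionary entry and every connection is a [source node id, output index] pair. The sketch below builds that structure and includes a helper that posts it to a locally running server. The checkpoint file name, the node IDs, and the function names are assumptions for illustration; the output indices follow the CheckpointLoader order MODEL (0), CLIP (1), VAE (2).

```python
import json
import urllib.request


def build_txt2img_workflow(ckpt_name, positive, negative, seed=0):
    """Return the basic SDXL text-to-image graph in API format."""
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        "2": {"class_type": "CLIPTextEncode",  # positive prompt
              "inputs": {"text": positive, "clip": ["1", 1]}},
        "3": {"class_type": "CLIPTextEncode",  # negative prompt
              "inputs": {"text": negative, "clip": ["1", 1]}},
        "4": {"class_type": "EmptyLatentImage",
              "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
        "5": {"class_type": "KSampler",
              "inputs": {"seed": seed, "steps": 25, "cfg": 7.0,
                         "sampler_name": "dpmpp_2m", "scheduler": "karras",
                         "denoise": 1.0,
                         "model": ["1", 0], "positive": ["2", 0],
                         "negative": ["3", 0], "latent_image": ["4", 0]}},
        "6": {"class_type": "VAEDecode",
              "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
        "7": {"class_type": "SaveImage",
              "inputs": {"filename_prefix": "ComfyUI", "images": ["6", 0]}},
    }


def queue_prompt(workflow, server="http://127.0.0.1:8188"):
    """POST the workflow to a running ComfyUI server's /prompt endpoint."""
    data = json.dumps({"prompt": workflow}).encode("utf-8")
    req = urllib.request.Request(
        f"{server}/prompt", data=data,
        headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


workflow = build_txt2img_workflow(
    "sd_xl_base_1.0.safetensors",  # assumed file in models/checkpoints
    "a serene mountain landscape at sunset, photorealistic, detailed",
    "blurry, low quality, distorted",
    seed=12345,
)
print(json.dumps(workflow["5"], indent=2))
# With ComfyUI running locally, queue_prompt(workflow) would submit the job.
```

Reading the graph this way makes the data flow explicit: each input on the KSampler names exactly which node and which output it draws from.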

Pro Tip: Save your workflow by clicking “Save” in the top menu. This creates a JSON file you can share or load later. I keep a library of workflow templates for different use cases.

Essential ComfyUI SDXL Nodes Explained

Understanding core nodes helps you build advanced workflows. I have spent countless hours experimenting with different node combinations. Here are the essential nodes every ComfyUI user should know.

KSampler: The core sampling node that performs the actual image generation. It takes your model, prompts, and latent image, then runs the diffusion process to create a new image from noise.

KSampler Variations

The KSampler node exposes many sampling algorithms through its sampler_name and scheduler widgets. In my testing, DPM++ samplers give the best results for SDXL:

- DPM++ 2M Karras (sampler_name dpmpp_2m, scheduler karras): My default choice. Fast and high quality.

- DPM++ SDE Karras (dpmpp_sde, karras): Slightly slower but more detailed results.

- Euler a (euler_ancestral): Fast, good for iterations and testing.

- DDIM (ddim): Classic sampler, deterministic output.

VAE (Variational AutoEncoder)

The VAE converts between latent space and visible images. VAE Encode turns images into latent format. VAE Decode turns latent samples back into viewable images. Every workflow needs VAE Decode at the end to see your results.

CLIP Text Encode

CLIP is the text understanding model. It converts your text prompts into numerical representations the AI can understand. SDXL benefits from detailed prompts with specific artistic and technical terms.


Creating Image-to-Image Workflows in SDXL

Image-to-image (img2img) workflows transform existing images while preserving composition. This technique is perfect for style transfer, variations, and enhancing sketches. I use img2img frequently for client work when they want to explore different artistic directions.

Quick Summary: Img2img replaces the Empty Latent Image node with a Load Image node + VAE Encode. The denoising strength controls how much the original image influences the result (0.1 = minimal change, 1.0 = completely new).

Building the Img2Img Workflow

Start with your basic text-to-image workflow. Then make these changes:

- Add Load Image node: From “image” category, load your input image.

- Add VAE Encode node: From “latent” category.

- Connect Load Image to VAE Encode: Connect the “IMAGE” output to the “pixels” input.

- Connect VAE to VAE Encode: Connect CheckpointLoader VAE output to “vae” input.

- Replace KSampler input: Connect the VAE Encode “LATENT” output to the KSampler “latent_image” input, in place of the Empty Latent Image connection.

- Set denoising strength: On the KSampler node, set the “denoise” value to 0.4-0.7 for img2img.

The denoising strength parameter is crucial. After testing dozens of images, here is what I found:

- 0.2-0.3: Subtle changes, preserves most details

- 0.4-0.6: Balanced transformation, my sweet spot

- 0.7-0.9: Major changes, composition mostly preserved

- 1.0: Ignores input image, acts like text-to-image
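Expressed on an API-format workflow, the img2img conversion is a small rewiring: swap the Empty Latent Image node for Load Image, add a VAE Encode node, point the KSampler at it, and lower denoise. The sketch below does exactly that on a compact example graph. The helper names, node IDs, and file names are illustrative assumptions, and the input image is assumed to already sit in ComfyUI's input folder.

```python
import copy

# Compact API-format text-to-image graph used as the starting point.
BASE = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "sd_xl_base_1.0.safetensors"}},
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "full color illustration", "clip": ["1", 1]}},
    "3": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "blurry", "clip": ["1", 1]}},
    "4": {"class_type": "EmptyLatentImage",
          "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
    "5": {"class_type": "KSampler",
          "inputs": {"seed": 1, "steps": 25, "cfg": 7.0,
                     "sampler_name": "dpmpp_2m", "scheduler": "karras",
                     "denoise": 1.0, "model": ["1", 0],
                     "positive": ["2", 0], "negative": ["3", 0],
                     "latent_image": ["4", 0]}},
    "6": {"class_type": "VAEDecode",
          "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
    "7": {"class_type": "SaveImage",
          "inputs": {"filename_prefix": "img2img", "images": ["6", 0]}},
}


def _find(wf, class_type):
    """Return the id of the first node with the given class_type."""
    for node_id, node in wf.items():
        if node["class_type"] == class_type:
            return node_id
    raise KeyError(class_type)


def to_img2img(workflow, image_name, denoise=0.5):
    """Return a copy of the workflow rewired for image-to-image."""
    if not 0.0 < denoise <= 1.0:
        raise ValueError("denoise must be in (0, 1]")
    wf = copy.deepcopy(workflow)
    loader_id = _find(wf, "CheckpointLoaderSimple")
    sampler_id = _find(wf, "KSampler")
    empty_id = _find(wf, "EmptyLatentImage")
    # Load Image takes over the slot of the Empty Latent Image node.
    wf[empty_id] = {"class_type": "LoadImage",
                    "inputs": {"image": image_name}}
    encode_id = str(max(int(k) for k in wf) + 1)
    wf[encode_id] = {"class_type": "VAEEncode",
                     "inputs": {"pixels": [empty_id, 0],
                                "vae": [loader_id, 2]}}
    wf[sampler_id]["inputs"]["latent_image"] = [encode_id, 0]
    wf[sampler_id]["inputs"]["denoise"] = denoise
    return wf


img2img = to_img2img(BASE, "sketch.png", denoise=0.5)
print(img2img["5"]["inputs"]["latent_image"], img2img["5"]["inputs"]["denoise"])
```

Because the function works on a copy, the original text-to-image graph stays available as a template for other variations.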

Practical Application: I recently used img2img to convert rough pencil sketches into full color illustrations. Setting denoising to 0.5 preserved the sketch structure while adding SDXL’s detail and color.

Using LoRAs and Style Models

LoRAs (Low-Rank Adaptation) are small model files that add specific styles, characters, or concepts to your SDXL workflow. They have transformed my workflow by letting me achieve consistent results without retraining entire models.

LoRA: A small adapter file (often in the 100-200MB range for SDXL, though sizes vary widely) that modifies a base model’s behavior. LoRAs can add artistic styles, character consistency, or specific objects without replacing the entire checkpoint.

Adding LoRA to Your Workflow

- Download LoRA files: Get .safetensors LoRA files from Civitai or Hugging Face.

- Place in correct folder: Copy to ComfyUI/models/loras/

- Add LoraLoader node: Right-click → add node → loaders → LoraLoader

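- Connect to CheckpointLoader: Connect the CheckpointLoader MODEL and CLIP outputs to the LoraLoader “model” and “clip” inputs

- Select LoRA model: Click the lora_name dropdown and select your LoRA

- Set strength: Adjust strength_model and strength_clip (0.5-1.0 is typical)

- Use LoraLoader outputs: Connect LoraLoader MODEL and CLIP to the rest of your workflow in place of the CheckpointLoader MODEL and CLIP. The VAE still comes from the CheckpointLoader.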

The strength parameter controls LoRA influence. Through testing, I have found these guidelines:

When to Use High Strength (0.8-1.2)

Strong style LoRAs, character consistency, dramatic style changes. Use when you want the LoRA’s effect to be very prominent.

When to Use Low Strength (0.2-0.5)

Subtle enhancements, detail boosters, lighting adjustments. Use for fine-tuning without overwhelming your base model.

Popular SDXL LoRA Categories

After experimenting with hundreds of LoRAs, here are my recommended starting points:

- Style LoRAs: Add artistic styles (watercolor, oil painting, anime, pixel art)

- Character LoRAs: Maintain character consistency across images

- Concept LoRAs: Add specific objects, clothing, or accessories

- Detail LoRAs: Enhance faces, hands, or specific areas

Pro Tip: You can chain multiple LoRA nodes together for combined effects. Just connect the first LoraLoader’s MODEL and CLIP outputs to a second LoraLoader’s inputs. Be careful with total strength, because multiple strong LoRAs can conflict.
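The chaining idea can be sketched on an API-format workflow: each LoraLoader takes MODEL and CLIP from the node before it, and everything downstream is rewired to read from the newest LoraLoader. The helper name `add_lora`, the node IDs, and the LoRA file names below are hypothetical; the input names strength_model and strength_clip are the two strength widgets on the LoraLoader node.

```python
import copy

# Trimmed API-format graph: only the nodes that matter for LoRA wiring.
BASE = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "sd_xl_base_1.0.safetensors"}},
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "watercolor fox", "clip": ["1", 1]}},
    "3": {"class_type": "KSampler",
          "inputs": {"model": ["1", 0], "positive": ["2", 0]}},
    "4": {"class_type": "VAEDecode",
          "inputs": {"samples": ["3", 0], "vae": ["1", 2]}},
}


def add_lora(workflow, source_id, lora_name,
             strength_model=0.8, strength_clip=0.8):
    """Insert a LoraLoader after source_id; return (workflow, new id).

    source_id must provide MODEL at output 0 and CLIP at output 1,
    which holds for both CheckpointLoaderSimple and LoraLoader.
    """
    wf = copy.deepcopy(workflow)
    new_id = str(max(int(k) for k in wf) + 1)
    # Redirect every consumer of the source's MODEL/CLIP to the new node.
    for node in wf.values():
        for key, value in node["inputs"].items():
            if value == [source_id, 0] or value == [source_id, 1]:
                node["inputs"][key] = [new_id, value[1]]
    wf[new_id] = {"class_type": "LoraLoader",
                  "inputs": {"lora_name": lora_name,
                             "strength_model": strength_model,
                             "strength_clip": strength_clip,
                             "model": [source_id, 0],
                             "clip": [source_id, 1]}}
    return wf, new_id


# Chain a style LoRA at 0.8 and a detail LoRA at 0.4 (file names invented).
wf, first = add_lora(BASE, "1", "watercolor_style.safetensors", 0.8, 0.8)
wf, second = add_lora(wf, first, "detail_boost.safetensors", 0.4, 0.4)
print(wf["3"]["inputs"]["model"], wf[second]["inputs"]["model"])
```

The VAE link is deliberately left pointing at the checkpoint loader, since LoraLoader has no VAE output.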

Common ComfyUI SDXL Issues and Fixes

Even after months of using ComfyUI, I still encounter errors. Understanding common problems saves hours of frustration. Here are the issues I see most frequently and their solutions.

| Problem | Cause | Solution |
|---|---|---|
| Black images output | VAE not connected or missing | Check VAE connection from CheckpointLoader to VAE Decode |
| “Out of Memory” error | Insufficient VRAM for image size | Reduce resolution to 768×768 or lower batch size |
| CUDA errors | GPU driver or PyTorch issues | Update GPU drivers and reinstall PyTorch |
| Model not found | Incorrect file path or missing model | Verify model is in correct models/ subfolder |
| Extremely slow generation | CPU mode or high step count | Ensure GPU is being used, reduce sampling steps |

Black Images Fix

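This is the most common beginner issue. When you generate images and get solid black outputs, the problem is almost always VAE-related. The latent samples from KSampler need VAE Decode to become visible images.

First verify your workflow: CheckpointLoader → VAE output connects to VAE Decode → vae input. This connection is mandatory, and ComfyUI reports a missing-input error instead of generating if it is absent. If the wiring is correct and the output is still black, the VAE itself is the likely culprit: the SDXL VAE can overflow in half precision, so try launching ComfyUI with the --fp32-vae flag or loading a different VAE file.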
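For workflows submitted from a script, the wiring check can be automated before the job is queued. The function below is a hypothetical pre-flight check on an API-format workflow dictionary; it only inspects the vae input of VAE Decode nodes and does not replace ComfyUI's own validation.

```python
def find_vae_decode_problems(workflow):
    """Return messages for VAEDecode nodes with a bad vae input."""
    problems = []
    for node_id, node in workflow.items():
        if node.get("class_type") != "VAEDecode":
            continue
        link = node.get("inputs", {}).get("vae")
        # A valid link is a [source node id, output index] pair.
        if not (isinstance(link, list) and len(link) == 2):
            problems.append(f"node {node_id}: vae input is not connected")
        elif link[0] not in workflow:
            problems.append(
                f"node {node_id}: vae input points at missing node {link[0]}")
    return problems


# Example: a decode node whose vae input was never wired.
broken = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "sd_xl_base_1.0.safetensors"}},
    "6": {"class_type": "VAEDecode", "inputs": {"samples": ["5", 0]}},
}
print(find_vae_decode_problems(broken))
```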

Out of Memory Solutions

SDXL at 1024×1024 requires significant VRAM. If you run out of memory:

- Reduce resolution: Try 768×768 or 896×832 instead of 1024×1024

- Lower batch size: Set batch size to 1 instead of 2 or 4

- Use fewer LoRAs: Each loaded LoRA consumes VRAM

- Close other applications: Free up GPU memory

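- Enable tiled VAE: Swap VAE Decode for the built-in “VAE Decode (Tiled)” node, which decodes the image in pieces and needs less VRAM; some custom nodes offer further tiled processing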
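When reducing resolution, it is usually better to keep the aspect ratio than to jump straight to a square. The sketch below scales a size down to fit a pixel budget and snaps both sides to a multiple of 64. The function name, the budget, and the choice of 64 as the rounding step are assumptions for illustration, not ComfyUI requirements.

```python
import math


def scale_to_budget(width, height, max_pixels, multiple=64):
    """Shrink (width, height) to fit max_pixels, keeping aspect ratio.

    Sizes already within the budget are returned unchanged apart from
    rounding down to the nearest multiple.
    """
    scale = min(1.0, math.sqrt(max_pixels / (width * height)))
    new_w = max(multiple, int(width * scale) // multiple * multiple)
    new_h = max(multiple, int(height * scale) // multiple * multiple)
    return new_w, new_h


# Fit common SDXL sizes into a 768x768 pixel budget.
budget = 768 * 768
for w, h in ((1024, 1024), (1216, 832)):
    print((w, h), "->", scale_to_budget(w, h, budget))
```

Rounding down rather than to the nearest multiple guarantees the result never exceeds the budget that was asked for.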

Warning: Persistent CUDA errors often indicate outdated GPU drivers. Update to the latest drivers from NVIDIA or AMD for your specific GPU model.

Resources and Community

The ComfyUI community is incredibly active. When I need help with a complex workflow or want to find new techniques, these resources are invaluable:

- Official Documentation – Complete node reference and official tutorials

- GitHub Repository – Latest updates and bug reports

- Civitai – Best source for SDXL models, LoRAs, and workflows

- Hugging Face – Official SDXL models and research releases

- Reddit r/comfyui – Community help and workflow sharing

- ComfyUI Discord – Real-time help from experienced users

Frequently Asked Questions

What is ComfyUI and why should I use it?

ComfyUI is a node-based interface for Stable Diffusion that gives you visual control over AI image generation. It excels at workflow automation, batch processing, and creating complex image operations that are difficult in other interfaces.

How much VRAM do I need for SDXL in ComfyUI?

SDXL requires at least 8GB VRAM for basic 1024×1024 generation. For comfortable use with LoRAs and higher resolutions, 12GB or more is recommended. GPUs with 16GB+ can handle complex workflows without memory issues.

How many sampling steps should I use for SDXL?

For SDXL in ComfyUI, 20-30 sampling steps with DPM++ 2M Karras sampler is the sweet spot. Fewer than 15 steps may lack detail, while more than 40 steps provide diminishing returns and slower generation.

What is the best CFG scale for SDXL?

The recommended CFG scale for SDXL is 5-8, with 7 being a good default. Lower values (3-5) follow prompts loosely, while higher values (9-12) adhere more strictly but may introduce artifacts.

Can I use ComfyUI without a GPU?

Yes, ComfyUI can run in CPU mode, but SDXL generation takes 5-10 minutes per image. CPU mode is usable for testing and learning, but a GPU with 8GB+ VRAM is essential for practical generation speeds.

Where can I download ComfyUI workflows?

Civitai has a dedicated workflows section where users share JSON files. Reddit’s r/comfyui community also shares workflows. You can also find workflow templates on GitHub and various ComfyUI-focused websites.

Is ComfyUI better than Automatic1111?

ComfyUI excels at workflow automation and batch processing with its node-based system. Automatic1111 has an easier learning curve and better web interface. Choose ComfyUI for production work and automation, Automatic1111 for casual generation.

Final Recommendations

ComfyUI SDXL workflows opened up new possibilities for my creative work. The initial learning curve is real—I spent my first week just understanding node connections. But that investment paid off quickly. Within a month, I was automating tasks that previously took hours.

Start with the basic text-to-image workflow covered in this guide. Master the fundamental nodes—CheckpointLoader, KSampler, VAE Decode, and the text encode nodes. Once you have that foundation, experiment with img2img and LoRAs to expand your capabilities.

Final Thought: “The best way to learn ComfyUI is to build workflows, save them, and modify them. Keep a library of working templates for different use cases. You will find yourself reusing and improving them constantly.”

Read the official ComfyUI documentation for deeper learning. Join the community on Reddit and Discord to see how others are pushing the boundaries. The node-based workflow system becomes second nature faster than you expect.
