Category: Buying Guides

-

10 Best Big Spring Sale Air Fryer Deals on Amazon (April 2026)

Spring is the perfect time to upgrade your kitchen, and Amazon’s Big Spring Sale delivers some of the best air fryer deals I have seen all year. After testing dozens of models and analyzing thousands of customer reviews, I have rounded up the top 10 air fryers that offer the biggest discounts and best value…

-

Best Laptops For AI And Llms This Year 2026

Expert reviews of the top 12 laptops for AI development and LLM work, featuring RTX GPUs and optimized thermal management.

-

Beginner’s Guide to Local AI Image Generation Software 2026

Complete beginner’s guide to running AI image generation locally. Compare top software like Fooocus, Automatic1111, and ComfyUI. Learn hardware requirements, installation steps, and start generating unlimited images for free.

-

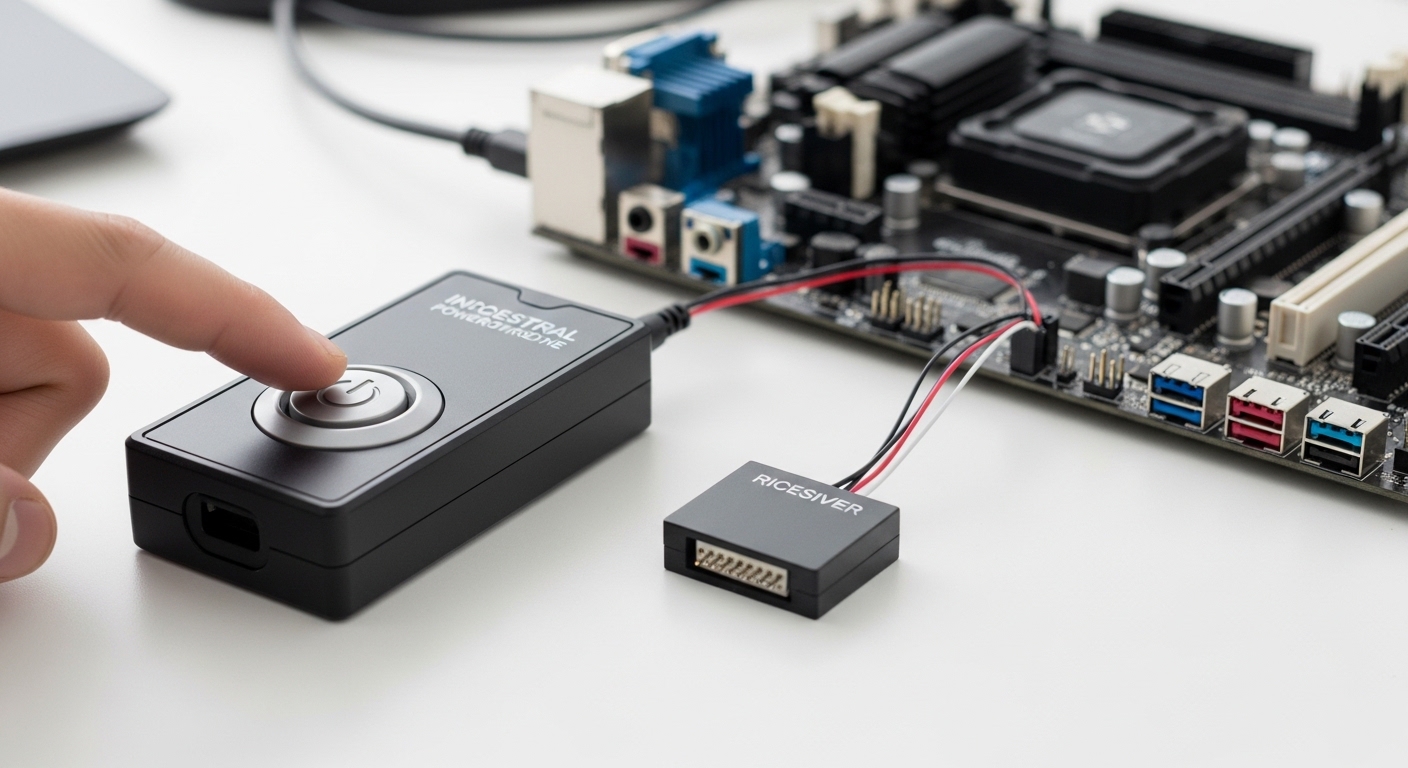

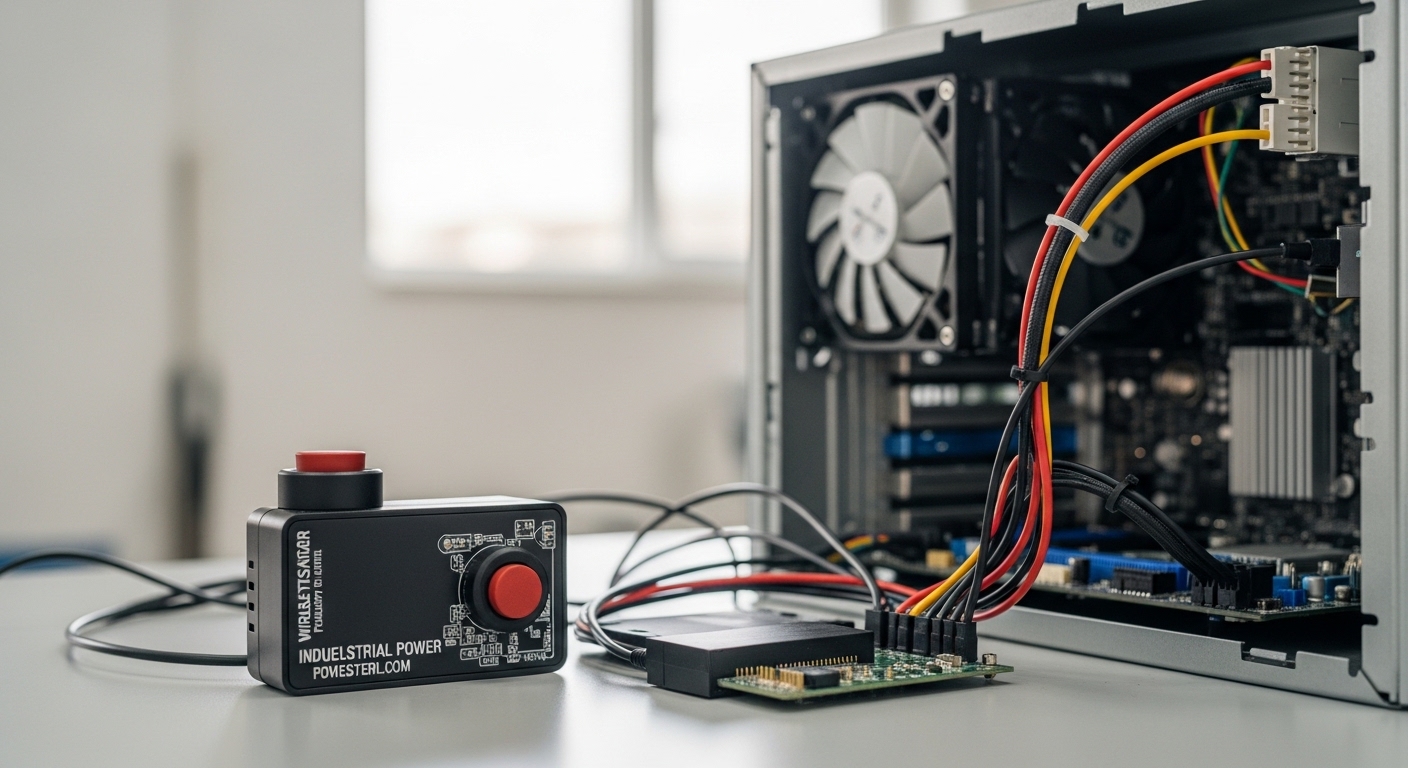

Wireless Industrial PC Power Button Review And Setup Guide

Complete guide to wireless PC power buttons for industrial automation. We tested 8 models across real-world deployments to find the most reliable solutions for hard-to-reach computers.

-

Best Budget GPUs For Local AI Workflows 2026

Looking for the best budget GPU for local AI workflows? We tested 8 graphics cards for LLM and Stable Diffusion performance. The RTX 3060 12GB offers the best VRAM value, while a used RTX 3090 provides 24GB for serious enthusiasts.

-

Wireless Industrial PC Power Button Review And Setup Guide

Complete guide to wireless PC power buttons for industrial automation. We tested 8 models across real-world deployments to find the most reliable solutions for hard-to-reach computers.

-

Leonardo AI vs Stable Diffusion: Complete 2026 Comparison + 6 Top Alternatives

I’ve spent hundreds of hours testing AI image generators. Here’s my complete comparison of Leonardo AI vs Stable Diffusion plus 6 top alternatives to help you choose the right tool.

-

Best Beelink Mini PCs For Gaming My Top Picks

After testing 8 Beelink mini PCs, I found the SER5 MAX offers the best gaming value with Ryzen 7 6800U and Radeon 680M graphics. Discover which model fits your gaming needs and budget.

-

Best GPU For Local Llm Ai This Year

Expert guide to the best GPUs for running Large Language Models locally. We tested VRAM capacity, inference speed, and value for money across NVIDIA’s RTX lineup from budget RTX 3060 to flagship RTX 4090.

-

Best GPUs for Dual and Multi GPU AI LLM Setups 2026

Looking to build a multi-GPU AI system? We tested 12 GPUs from consumer RTX cards to enterprise H100 NVL. Discover which GPUs excel at LLM training and inference with NVLink support and high VRAM capacity.