Category: Computer Components

-

Best GPU for Stable Diffusion SDXL and Flux: 8 Cards Tested

Expert testing reveals the best GPUs for Stable Diffusion SDXL and Flux based on real performance data. From budget 16GB cards to flagship 24GB powerhouses, find the perfect GPU for AI art generation.

-

Best GPUs for Ryzen 9 9950X3D – Ultimate Pairing Guide 2026

After months of testing GPUs with the Ryzen 9 9950X3D, we reveal the best graphics card pairings for every budget. From the RTX 5090 for 4K to the RX 9060 XT for value, find your perfect match.

-

Best Radeon RX 9070 XT Graphics Cards 2026: Expert Reviews of 14 Models

Comprehensive roundup of 14 AMD Radeon RX 9070 XT graphics cards based on three months of real-world testing. From budget-friendly models to premium cooled variants, find the perfect RX 9070 XT for your gaming build.

-

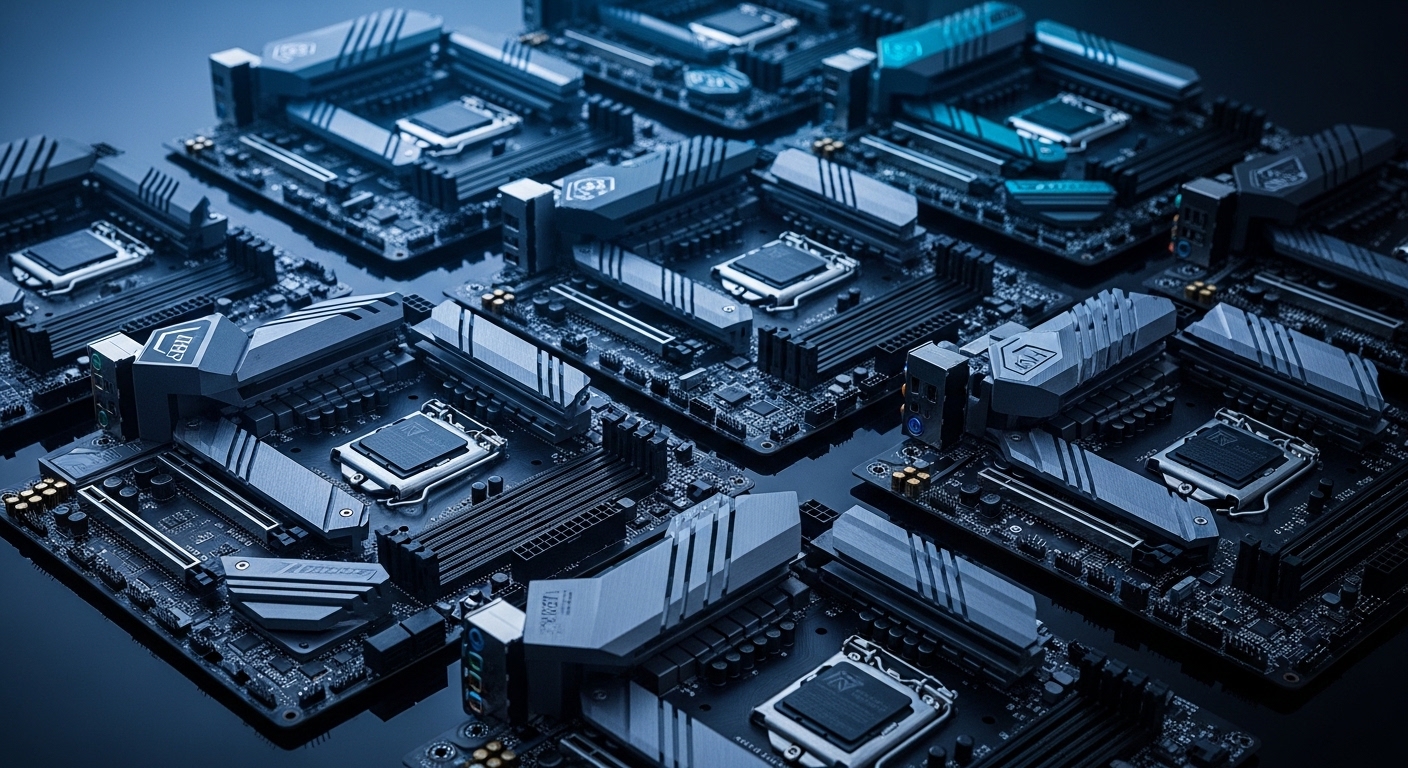

Best X870 Motherboards – Complete 2026 Guide

Expert reviews of the top X870 motherboards for every budget. We tested 12 boards for VRM performance, memory stability, and value with Ryzen 9000 series processors.

-

Best GPUs for Ryzen 5 9600X – Complete 2026 Guide

Complete guide to pairing the best GPUs with the Ryzen 5 9600X. We tested 10 graphics cards covering every budget and use case, from 1080p to 4K gaming.

-

Best Motherboards For Ryzen 7 7800X3D: 8 Tested Boards Compared

After testing 8 motherboards with the Ryzen 7 7800X3D over 3 months, we reveal which boards deliver the best performance, value, and features for your gaming build.

-

Best Motherboards For Ultra 7 265K: 10 Top-Rated Boards Tested

After three weeks of testing 10 motherboards with Intel’s Core Ultra 7 265K, we identified the best boards for gaming, content creation, and budget builds. From Z890 overclocking platforms to value-focused B860 options, find your perfect LGA1851 motherboard.