Category: How To

-

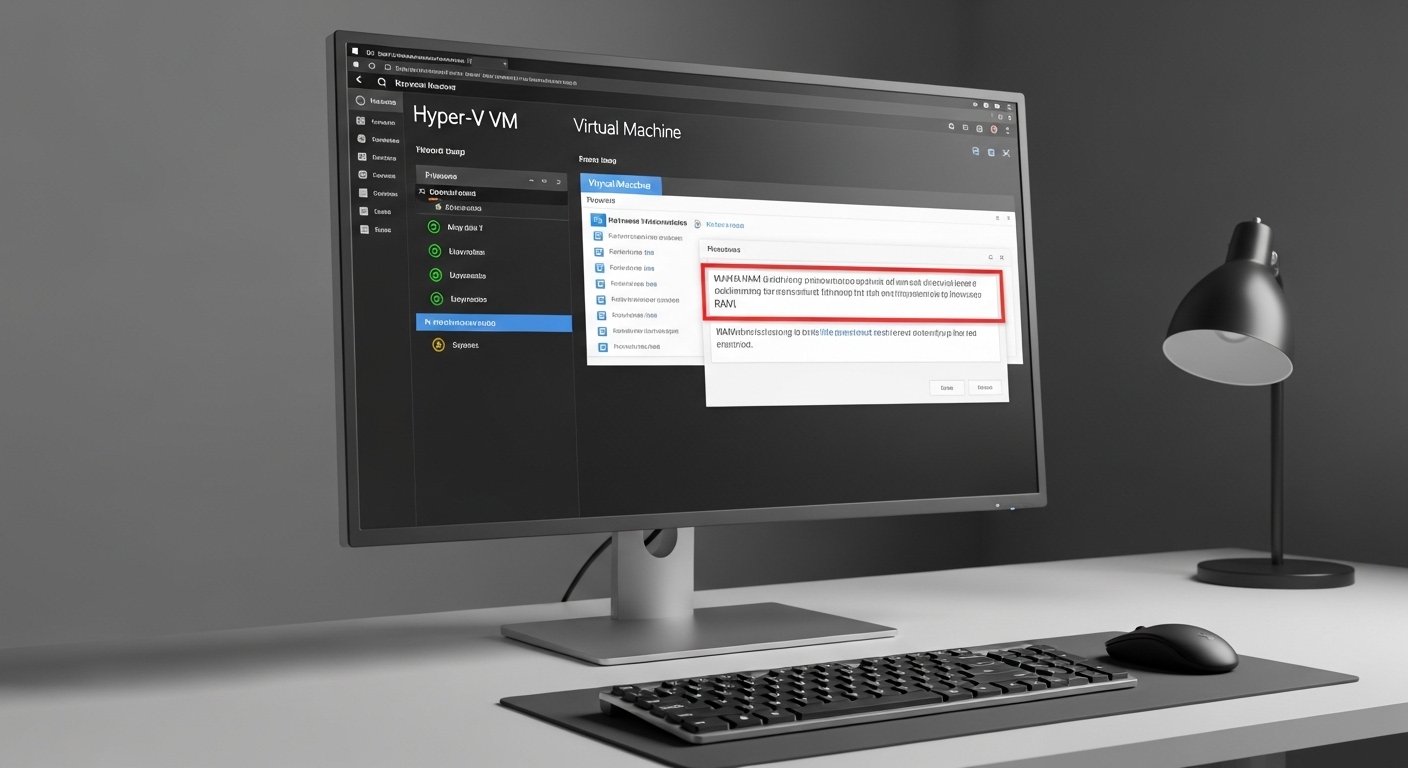

How To Fix The Unable To Allocate RAM Error In Hyper-V VMs

Complete guide to fixing the "Unable to allocate RAM" error in Hyper-V virtual machines. Learn 7 proven solutions, from Dynamic Memory configuration to advanced PowerShell troubleshooting.

-

Why Does The Windows CMD Terminal Randomly Freeze?

Complete troubleshooting guide to fix Windows CMD terminal freezing issues. Learn the 7 common causes and 12 proven solutions, from quick fixes to advanced diagnostics.

-

How To See GPU VRAM Usage On Linux Nvidia And AMD Cards

Complete guide to monitoring GPU VRAM usage on Linux. Covers nvidia-smi for Nvidia GPUs, rocm-smi for AMD GPUs, real-time monitoring tools, automation scripts, and troubleshooting common issues.

-

Local AI-Generated Music: ComfyUI ACE-Step Setup Tutorial

Complete step-by-step tutorial for setting up the ACE-Step music generation model in ComfyUI to generate AI music locally. Learn the system requirements, installation process, and workflow creation.

-

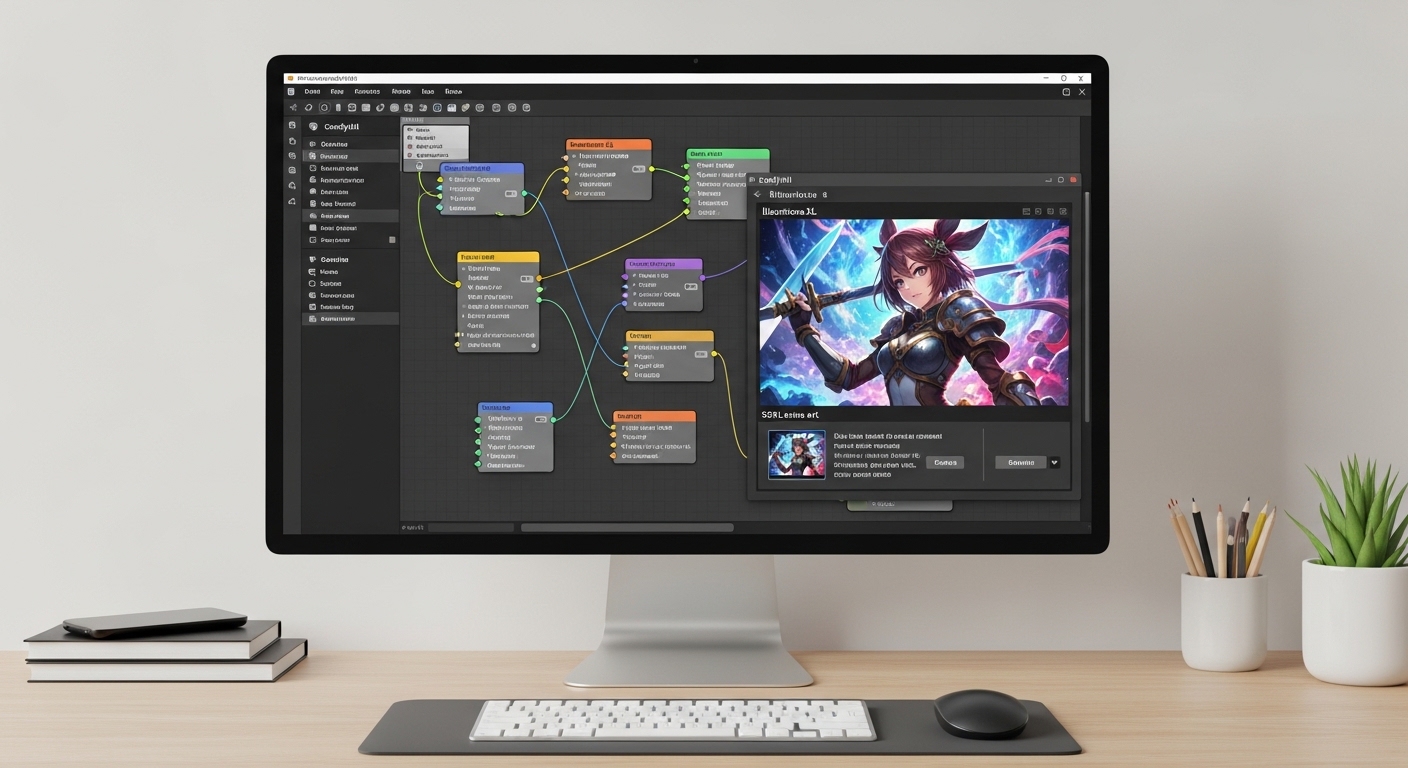

Illustrious XL ComfyUI SDXL Anime Guide For Beginners

Step-by-step beginner’s guide to generating anime artwork with the Illustrious XL SDXL model in ComfyUI. Learn installation, workflow setup, prompting, and troubleshooting.

-

How to Browse TikTok Without Account or App (Complete 2026 Guide)

Complete guide to browsing TikTok without an account or app. Learn how to use TikTok Web, third-party viewers, and privacy tools to watch videos anonymously.

-

Booru-Style Tagging SDXL Anime Prompts Guide 2026

Complete guide to booru-style tagging for SDXL anime prompts. Learn the structured tagging system with 15+ copy-paste examples, tag syntax rules, and advanced techniques for consistent anime art generation.

-

How To Search For Telegram Groups, Chats, And Channels 2026

Master Telegram search with proven methods to find quality groups, chats, and channels. Learn built-in search tricks, directory websites, Google search operators, and safety tips for avoiding spam communities.

-

Best Local LLM Software for NVIDIA and AMD GPUs in 2026

Comprehensive comparison of 12 local LLM software options for NVIDIA and AMD GPUs. Tested on the RTX 3060, RTX 3090, RX 6800 XT, and RX 7900 XT, with performance notes specific to each platform.