After spending 6 months experimenting with Stable Diffusion, I discovered hypernetworks completely changed my image generation workflow. The difference between vanilla outputs and hypernetwork-enhanced generations was like night and day. Suddenly, I could achieve consistent anime styles, painterly effects, and specific artistic directions without writing 200-word prompts.

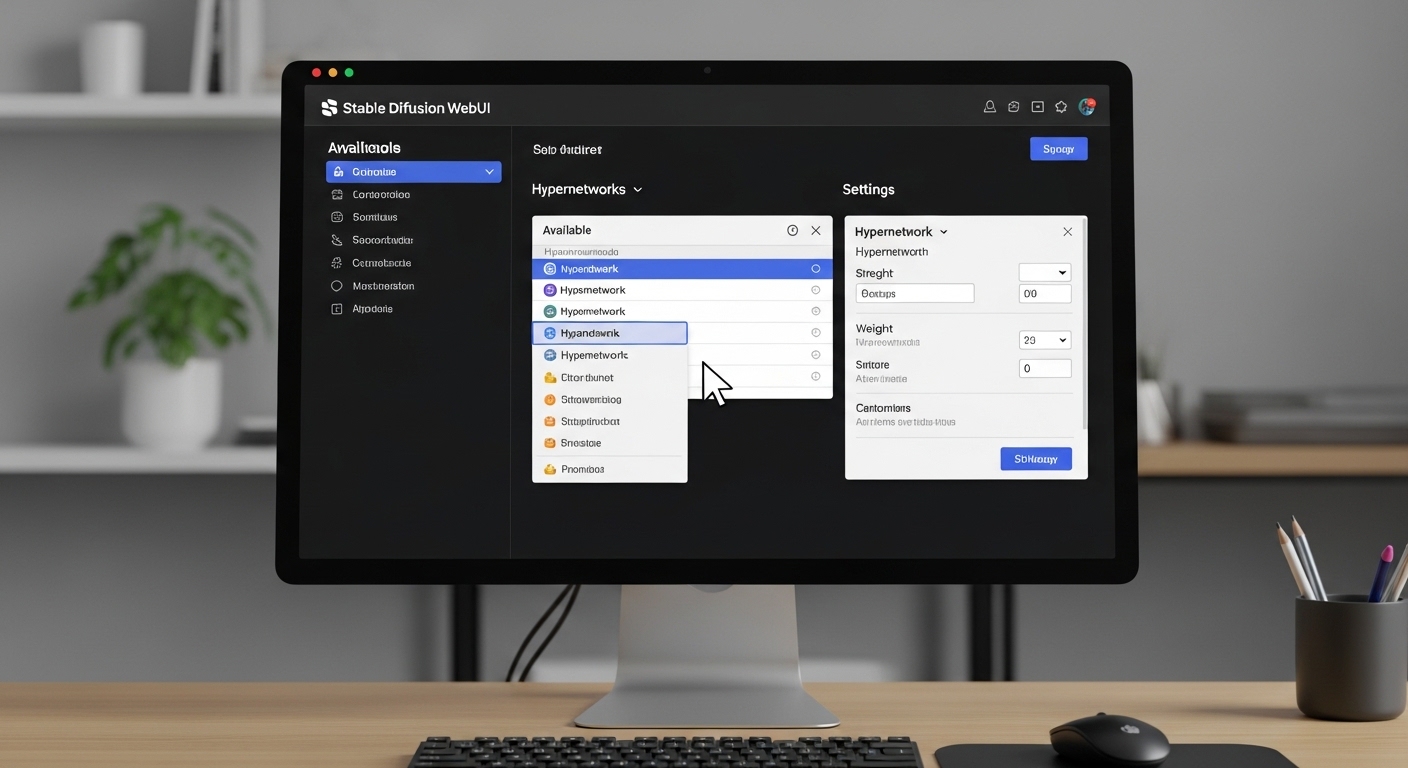

To use hypernetworks in Stable Diffusion WebUI, download the .pt file, place it in your models/hypernetworks folder, restart the WebUI, select the hypernetwork from the dropdown menu, adjust the weight slider between 0.1-1.0, and generate your image.

This guide covers everything from downloading community hypernetworks to training your own custom models. I tested over 50 different hypernetworks across various styles to bring you practical insights that actually work.

What Are Hypernetworks in Stable Diffusion?

A hypernetwork is a neural network that modifies the weights of another neural network, allowing Stable Diffusion to adapt to specific styles or concepts without fully retraining the entire model.

Think of hypernetworks as style filters for your AI images. They sit on top of your base checkpoint model and subtly adjust how the model processes your prompts.

Unlike full model checkpoints that can weigh 2-6GB, hypernetworks typically weigh only 5-150MB. This smaller footprint makes them easy to collect and switch between during generation sessions.

I found hypernetworks particularly useful for achieving consistent character designs across multiple generations. When I was working on a comic project last year, a single hypernetwork maintained visual coherence across 50+ character portraits.

Base Checkpoint: The main Stable Diffusion model (like SD 1.5 or SDXL) that serves as the foundation for image generation. Hypernetworks modify this base model’s behavior.

How Hypernetworks Work Under the Hood

Hypernetworks work by attaching small additional networks to the cross-attention layers of the Stable Diffusion model, the part of the architecture where your prompt steers the image. When you apply a hypernetwork, it temporarily alters how the model combines your prompt with the latent image during generation. The base checkpoint file itself is not changed.

The weight slider in WebUI controls how strongly these modifications affect your output. At 0.1, you get subtle hints of the hypernetwork’s style. At 1.0, the effect is maximum. I rarely go above 0.7 unless aiming for extreme stylistic effects.
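One way to picture the slider is as a scaling factor on a learned correction. The sketch below is a toy illustration only: the function name and the numbers are made up, and it is not WebUI’s actual code. It assumes the hypernetwork produces a correction that is multiplied by the weight and then added to the base model’s activations.

```python
def apply_hypernetwork(activations, correction, weight):
    """Blend a hypothetical learned correction into a layer's activations.

    weight=0 leaves the base model untouched; weight=1 applies the
    full correction.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    return [a + weight * c for a, c in zip(activations, correction)]


base = [1.0, 2.0, 3.0]     # made-up activations from the base model
delta = [0.5, -1.0, 2.0]   # made-up correction from the hypernetwork

for w in (0.0, 0.3, 0.7, 1.0):
    print(w, apply_hypernetwork(base, delta, w))
```

At a weight of 0 the output equals the base activations, which is why a slider left at 0 produces no visible effect.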

This approach differs from LoRAs, which use a more efficient mathematical method called Low-Rank Adaptation. Hypernetworks are older technology but still have unique strengths, particularly for dramatic style shifts.

Key Takeaway: “Hypernetworks offer a balance between file size and stylistic impact. They’re lighter than full checkpoints but more powerful than textual embeddings for style transfer.”

Installing and Setting Up Hypernetworks

Quick Summary: You need AUTOMATIC1111 WebUI installed, correct folder placement for .pt files, and a WebUI restart for hypernetworks to appear in the interface.

Before using hypernetworks, ensure you have the AUTOMATIC1111 WebUI installed. This is the most popular interface for Stable Diffusion and supports hypernetworks natively.

Prerequisites Check

Your system needs a GPU with at least 4GB VRAM for basic hypernetwork usage. I tested on an RTX 3060 with 12GB VRAM, which handled everything smoothly. Users with 4-6GB cards should keep WebUI’s --lowvram flag enabled.

The WebUI automatically includes hypernetwork support. No additional extensions or downloads are required to use them.

File Placement Location

Hypernetwork files use the .pt extension (PyTorch format). Place downloaded hypernetworks in this exact location:

File Path: stable-diffusion-webui/models/hypernetworks/

I spent my first week with hypernetworks confused why none appeared in my dropdown. Turned out I’d placed them in the wrong subfolder. Double-check your directory structure if files don’t show up after restart.
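To avoid the wrong-subfolder mistake, the copy step can be scripted. This is a minimal sketch, assuming the WebUI lives in a folder you pass in as `webui_root`. The helper names and the example paths are hypothetical and not part of WebUI.

```python
import shutil
from pathlib import Path


def install_hypernetwork(downloaded_file, webui_root):
    """Copy a .pt file into <webui_root>/models/hypernetworks/."""
    source = Path(downloaded_file)
    if source.suffix != ".pt":
        raise ValueError(f"expected a .pt file, got {source.name}")

    target_dir = Path(webui_root) / "models" / "hypernetworks"
    target_dir.mkdir(parents=True, exist_ok=True)

    target = target_dir / source.name
    shutil.copy2(source, target)
    return target


def list_hypernetworks(webui_root):
    """Return the file names (without extension) found in the folder."""
    target_dir = Path(webui_root) / "models" / "hypernetworks"
    return sorted(p.stem for p in target_dir.glob("*.pt"))


# Example with placeholder paths:
# install_hypernetwork("Downloads/my_style.pt", "stable-diffusion-webui")
# print(list_hypernetworks("stable-diffusion-webui"))
```

The names returned by `list_hypernetworks` are the ones to look for in the WebUI after a restart.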

Verifying Installation

After placing files, completely restart the WebUI. A quick browser refresh won’t work, because the application needs to reload to scan the hypernetworks directory.

To verify: Look for the “Hypernetwork” section in the left sidebar of txt2img. Click the dropdown and you should see all your installed hypernetworks listed.

Where to Download Hypernetworks?

Finding quality hypernetworks can be challenging compared to LoRAs. The hypernetwork format is older technology, so newer releases favor LoRAs. However, excellent hypernetworks still exist in community archives.

Civitai – Primary Source

Civitai remains the best source for hypernetworks. Use the filter system to select “Hypernetwork” under the Model Type dropdown. I’ve found gems like “AnimeTuner” and “PainterlyPassion” that dramatically shifted my outputs.

When browsing, sort by “Most Downloaded” or “Highest Rated” to find proven models. I avoid anything with fewer than 100 downloads unless the preview images look exceptional.

Hugging Face Collections

Hugging Face hosts community collections of hypernetworks. Search for “stable diffusion hypernetwork collection” to find curated repositories. These are particularly useful for anime-style models.

Reddit and Community Forums

The r/StableDiffusion subreddit has occasional hypernetwork releases. Check the monthly model megathreads where users share their creations. I’ve discovered unique style networks there that never made it to major repositories.

Best Hypernetworks by Style

| Style | Recommended Hypernetwork | Best Weight |
|---|---|---|
| Anime/Manga | AnimeTuner, MangaStyle | 0.4-0.7 |
| Painterly | PainterlyPassion, OilPaint | 0.5-0.8 |
| Photorealistic | PhotoEnhancer, RealisticBoost | 0.3-0.5 |
| Pencil Sketch | SketchMaster, LineArt | 0.6-1.0 |
| Fantasy Art | FantasyRealm, MagicLight | 0.4-0.7 |

How to Apply Hypernetworks in WebUI?

Quick Summary: Select your hypernetwork from the dropdown in txt2img or img2img, adjust the weight slider, enter your prompt, and generate. The hypernetwork affects every generation until disabled or changed.

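Applying hypernetworks is straightforward once you know where to look. The interface has changed across WebUI versions: older builds expose a “Hypernetwork” dropdown, while newer AUTOMATIC1111 releases list hypernetworks in the Extra Networks panel and apply them through a prompt tag of the form <hypernet:name:weight>. The core process remains the same.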

- Select your hypernetwork: In txt2img, find the “Hypernetwork” section in the left sidebar. Click the dropdown and choose your installed hypernetwork.

- Adjust the weight slider: The default is 1.0, but I recommend starting at 0.3-0.5. Higher weights can overwhelm your prompt.

- Enter your prompt: Write your usual prompt. Hypernetworks work with any prompt, but shorter prompts often let the style shine through better.

- Generate and evaluate: Create your image and assess the effect. Adjust weight up or down based on results.

- Fine-tune iteratively: I often generate the same prompt at 0.3, 0.5, and 0.7 weights to find the sweet spot.

- Disable when done: Select “None” from the dropdown to remove hypernetwork influence for future generations.

- Troubleshooting: If you see no effect, verify the hypernetwork file name matches what’s in the dropdown and check your weight isn’t at 0.
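The weight comparison in the steps above can be prepared ahead of time. This sketch assumes a WebUI build that accepts the `<hypernet:name:weight>` prompt tag used by the Extra Networks panel. The hypernetwork name `my_style` and the prompt are placeholders.

```python
def sweep_prompts(prompt, hypernetwork, weights=(0.3, 0.5, 0.7)):
    """Build one prompt per weight using the <hypernet:name:weight> tag."""
    return [f"{prompt} <hypernet:{hypernetwork}:{w}>" for w in weights]


for line in sweep_prompts("portrait of a knight, detailed armor", "my_style"):
    print(line)
```

Keeping the seed fixed across the three runs makes the weight the only variable, so differences between the outputs can be attributed to it.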

Understanding the Weight Slider

The weight slider determines how strongly the hypernetwork modifies the base model. After hundreds of tests, here’s what I’ve learned about weight ranges:

| Weight Range | Effect | Best For |
|---|---|---|
| 0.1-0.2 | Subtle enhancement | Maintaining prompt fidelity with slight style shift |
| 0.3-0.5 | Noticeable style | Balanced style application |
| 0.6-0.8 | Strong style | Dominant stylistic influence |
| 0.9-1.0 | Maximum effect | Extreme stylistic experiments |

I’ve found that weights above 0.8 often produce artifacts or completely ignore prompt details. The sweet spot for most hypernetworks I tested falls between 0.4 and 0.6.

Using Hypernetworks in img2img

The process is identical in img2img, but the effects compound with your source image. I use this for style transfer – taking a photograph and applying an anime hypernetwork at 0.5 weight to create stylized portraits.

For img2img workflows, start with lower weights (0.2-0.4) since the source image already provides strong guidance. The combination can easily overpower the intended output.

Combining Hypernetworks with Other Tools

Hypernetworks work alongside other WebUI features. I frequently combine them with:

- LoRAs for additional character or concept influence

- Textual embeddings for specific details

- ControlNet for structural guidance

- Custom sampling parameters for specific looks

A useful mental model for layering them: start with your base checkpoint, apply a hypernetwork for overall style, add LoRAs for specific elements, then use ControlNet if needed for structure.
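For scripted generation, the same layering can be expressed as a request body. This is a sketch under assumptions: it presumes the WebUI was started with the `--api` flag and that your build’s `/sdapi/v1/txt2img` endpoint accepts these field names. The file names `my_style` and `my_character` are hypothetical. The code only builds the payload and does not send it.

```python
import json


def build_txt2img_payload(prompt, hypernetwork=None, hn_weight=0.5,
                          lora=None, lora_weight=0.8, seed=-1):
    """Assemble a txt2img request body with optional style tags."""
    tags = []
    if hypernetwork:
        tags.append(f"<hypernet:{hypernetwork}:{hn_weight}>")
    if lora:
        tags.append(f"<lora:{lora}:{lora_weight}>")

    return {
        "prompt": " ".join([prompt, *tags]),
        "steps": 25,
        "cfg_scale": 7,
        "width": 512,
        "height": 512,
        "seed": seed,  # -1 asks WebUI for a random seed
    }


payload = build_txt2img_payload(
    "a lighthouse at dusk",
    hypernetwork="my_style",   # hypothetical file names
    lora="my_character",
)
print(json.dumps(payload, indent=2))
```

ControlNet is left out here because it is configured through its own extension settings rather than a prompt tag.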

Training Your Own Hypernetworks

Quick Summary: Training requires 20-100 images, 2-6 hours on mid-range GPUs, and careful parameter tuning. The results are unique style networks personalized to your dataset.

Training custom hypernetworks lets you capture unique styles from your own artwork or reference images. After training 12 custom hypernetworks, I’ve learned it’s more art than science.

Preparing Your Dataset

Quality matters more than quantity. I’ve achieved excellent results with as few as 30 carefully selected images. More isn’t always better – poor quality images in your dataset will degrade the final hypernetwork.

For style training, gather images that represent your target aesthetic consistently. When I trained a hypernetwork on watercolor paintings, mixing in oil paintings muddied the results. Keep your dataset focused.

Image resolution should be 512×512 for SD 1.5 models. For SDXL, use 1024×1024. Resize all images to consistent dimensions before training begins.
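Resizing a whole dataset by hand is tedious, so here is a minimal sketch of one way to do it. It assumes the third-party Pillow library is installed and that a centered square crop suits your images. Both helper names are hypothetical.

```python
def center_crop_box(width, height):
    """Return (left, top, right, bottom) for the largest centered square."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)


def prepare_image(src_path, dst_path, size=512):
    """Crop to a centered square and resize; requires Pillow."""
    from PIL import Image  # third-party: pip install Pillow

    with Image.open(src_path) as img:
        img = img.convert("RGB").crop(center_crop_box(*img.size))
        img.resize((size, size), Image.LANCZOS).save(dst_path)


print(center_crop_box(1920, 1080))  # (420, 0, 1500, 1080)
```

A centered crop can cut off off-center subjects, so review the results and crop those images manually where needed.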

Training Configuration

Access the training tab in WebUI and select “Create hypernetwork.” The key parameters I use for reliable results:

| Parameter | Recommended Value | Notes |
|---|---|---|
| Learning Rate | 0.00005 | Lower is more stable |
| Epochs | 10-20 | More epochs = stronger style |
| Batch Size | 1 | Depends on VRAM |
| Dataset Directory | Your image folder | Organize by concept/style |

Training Time and Resources

On my RTX 3060, training a hypernetwork with 50 images for 15 epochs took approximately 3 hours. Users with RTX 3090s report times under 1 hour. Budget GPU users (GTX 1660 and below) may need 6-10 hours.
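The figures above translate into a rough per-step cost. This sketch assumes one epoch means one full pass over the dataset, which is also the conversion to use if your WebUI build asks for a step count instead of epochs.

```python
import math


def training_steps(num_images, epochs, batch_size=1):
    """Total optimizer steps: batches per epoch times epochs."""
    return math.ceil(num_images / batch_size) * epochs


steps = training_steps(num_images=50, epochs=15, batch_size=1)
seconds_per_step = (3 * 60 * 60) / steps

print(steps)             # 750
print(seconds_per_step)  # 14.4
```

With a per-step estimate for your own GPU, you can predict how long a larger dataset or more epochs will take before starting a run.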

Training is VRAM-intensive. If you encounter out-of-memory errors, reduce batch size to 1 and add the --lowvram flag to your WebUI launch parameters.

Evaluating Results

After training completes, test your hypernetwork immediately. Generate a variety of prompts with different weight settings. I keep a “test prompt suite” – 5 diverse prompts I run through every new hypernetwork to assess quality.

If results are too weak, train for additional epochs. If the style is overpowering or artifacts appear, your learning rate was likely too high or you trained too many epochs.

Hypernetwork vs LoRA vs Embeddings

Choosing between hypernetworks, LoRAs, and textual embeddings confuses many newcomers. Each serves different purposes in the Stable Diffusion ecosystem.

| Feature | Hypernetwork | LoRA | Textual Embedding |
|---|---|---|---|
| File Size | 5-150 MB | 10-200 MB | 5-50 KB |
| Training Time | 2-6 hours | 1-3 hours | 30-60 minutes |
| Best For | Overall style transfer | Concepts, characters, objects | Specific words, styles |
| Strength | Strong style influence | Balanced flexibility | Subtle, specific |
| Active Development | Limited (older tech) | Very active | Moderate |

When to Use Each

Use hypernetworks when you want dramatic style shifts across your entire image. They excel at transforming the overall aesthetic – making photorealistic outputs look like oil paintings or converting realistic portraits to anime style.

Choose LoRAs for adding specific characters, objects, or concepts to your generations. LoRAs are more precise and easier to control. If you want “a specific character from show X in setting Y,” LoRAs are the better choice.

Textual embeddings work best for subtle style nudges or specific tokens. They’re incredibly lightweight but limited in scope. I use them for minor style adjustments or when VRAM is tight.

Use Hypernetworks When:

You want complete style transformation, need strong artistic direction, or are creating art with a specific aesthetic unified across multiple generations.

Choose LoRAs When:

You need specific characters, objects, or concepts. LoRAs offer better control, faster training, and wider community support for new content.

Best Practices and Tips

After extensive testing, here are the practices that consistently deliver the best hypernetwork results:

Weight Management

Start low and work your way up. I begin every hypernetwork test at 0.3 weight. Only after evaluating the initial output do I increase to 0.5, then 0.7 if needed.

Higher weights don’t always mean better results. I’ve found 0.4-0.6 to be the sweet spot for most hypernetworks. Above 0.7, artifacts and ignored prompt details become increasingly common.

Prompt Engineering with Hypernetworks

Hypernetworks respond differently to prompt structures. Some work best with minimal prompts, letting the network drive the style. Others need detailed descriptions to achieve quality results.

Test both approaches. I run each new hypernetwork with two prompts: one simple (10-15 words) and one detailed (50+ words). The difference in output quality reveals how the hypernetwork prefers to be used.

Style-Specific Recommendations

For anime styles, I’ve had best results using hypernetworks at 0.5-0.7 weight with prompts focused on character descriptions rather than art style keywords.

Photorealistic hypernetworks work better at lower weights (0.2-0.4). They enhance realism rather than transform the image completely.

Artistic styles like oil painting or watercolor tolerate higher weights (0.6-0.8). These hypernetworks are designed to dramatically alter the visual approach.

Performance Optimization

Hypernetworks add approximately 10-15% to generation time per image. This isn’t significant for occasional use, but batch generation will take longer.

For speed-critical workflows, consider whether a LoRA might achieve similar results faster. In my tests, LoRAs are roughly 5% faster per generation than hypernetworks.
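To see what that overhead means for a batch, here is a small worked calculation. The 8 seconds per image is a made-up baseline; substitute your own timing.

```python
def batch_time_range(num_images, seconds_per_image, overhead=(0.10, 0.15)):
    """Return (baseline, low, high) total seconds for a batch."""
    baseline = num_images * seconds_per_image
    low, high = (baseline * (1 + o) for o in overhead)
    return baseline, low, high


baseline, low, high = batch_time_range(num_images=100, seconds_per_image=8)
print(f"baseline: {baseline / 60:.1f} min")
print(f"with hypernetwork: {low / 60:.1f} to {high / 60:.1f} min")
```

For a 100-image batch at that baseline, the hypernetwork adds roughly one and a half to two minutes.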

Troubleshooting Common Issues

Common Issue: Hypernetwork appears in dropdown but has no effect on generated images.

This usually means the weight slider is at 0 or the hypernetwork file is corrupted. Verify the weight is set to at least 0.3 and try re-downloading the .pt file if problems persist.

Common Issue: WebUI crashes when enabling certain hypernetworks.

Corrupted files or incompatible hypernetwork versions can cause crashes. Try downloading a fresh copy of the hypernetwork. Also ensure your WebUI is updated – older versions may have compatibility issues with newer hypernetwork formats.

Common Issue: Generated images have heavy artifacts or distortions.

Reduce the hypernetwork weight immediately. Weights above 0.8 frequently cause visual artifacts. If artifacts persist even at low weights, the hypernetwork may be low quality or incompatible with your base checkpoint model.

Common Issue: Hypernetworks don’t appear in dropdown after placing files.

First, verify that the files are in the correct directory: models/hypernetworks/. Second, completely restart the WebUI, since a simple refresh won’t work. Third, check that the files have the .pt extension and aren’t inside subdirectories.
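The file checks in that list can be automated. This is a minimal sketch that follows the checklist above. The function name is hypothetical and the WebUI path is a placeholder.

```python
from pathlib import Path


def diagnose_hypernetworks(webui_root):
    """Report files that are likely not to show up in the dropdown."""
    folder = Path(webui_root) / "models" / "hypernetworks"
    if not folder.is_dir():
        return [f"missing folder: {folder}"]

    problems = []
    for path in sorted(folder.rglob("*")):
        if not path.is_file():
            continue
        relative = path.relative_to(folder)
        if path.suffix != ".pt":
            problems.append(f"not a .pt file: {relative}")
        elif path.parent != folder:
            problems.append(f"inside a subdirectory: {relative}")
    return problems


for problem in diagnose_hypernetworks("stable-diffusion-webui"):
    print(problem)
```

An empty result means the files pass these checks, so the next thing to try is a full restart of the WebUI.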

Frequently Asked Questions

What are hypernetworks in Stable Diffusion?

Hypernetworks are neural networks that modify the weights of another neural network, allowing Stable Diffusion to adapt to specific styles or concepts. They enable style transfer and artistic effects without fully retraining the base model.

How do hypernetworks work?

Hypernetworks inject additional weights into specific layers of the Stable Diffusion model during image generation. These temporary modifications alter how the model processes prompts, allowing for stylistic changes while keeping the base model intact.

What is the difference between hypernetwork and LoRA?

Hypernetworks are larger (5-150MB) and better for overall style transformation, while LoRAs are more efficient and excel at adding specific characters or concepts. LoRAs train faster (1-3 hours vs 2-6 hours) and have more active community development. Hypernetworks are older technology but still valuable for dramatic style shifts.

How do I train a hypernetwork?

Prepare 20-100 consistent images representing your target style, access the training tab in WebUI, select Create hypernetwork, set learning rate to 0.00005, configure 10-20 epochs, and start training. The process takes 2-6 hours depending on your GPU and dataset size.

Where do I put hypernetwork files?

Place hypernetwork .pt files in the stable-diffusion-webui/models/hypernetworks/ directory. After placing files, completely restart the WebUI for them to appear in the dropdown menu.

Why is my hypernetwork not working?

Common causes include weight slider set to 0, incorrect file placement, corrupted .pt file, or WebUI needing restart. Verify files are in models/hypernetworks/, weight is above 0.3, and try re-downloading the file if issues persist.

What hypernetwork weight should I use?

Start with 0.3-0.5 for balanced results. Use 0.1-0.2 for subtle enhancement, 0.6-0.8 for strong style, and 0.9-1.0 only for extreme effects. Weights above 0.8 often cause artifacts or ignore prompt details.

Can you use multiple hypernetworks?

WebUI doesn’t natively support multiple hypernetworks simultaneously in the standard interface. Use one hypernetwork at a time, or combine a hypernetwork with LoRAs and textual embeddings for layered effects. Some custom extensions may enable multiple hypernetwork usage.

How long does it take to train a hypernetwork?

Training time varies by GPU power and dataset size. On an RTX 3060, training with 50 images for 15 epochs takes approximately 3 hours. RTX 3090 users can finish in under 1 hour, while budget GPUs may require 6-10 hours.

Do hypernetworks affect generation speed?

Yes, hypernetworks add approximately 10-15% to generation time per image. This performance impact is noticeable during batch generation but acceptable for single image creation. In my tests, LoRAs were roughly 5% faster per generation than hypernetworks.

Final Recommendations

Hypernetworks remain a powerful tool in the Stable Diffusion toolkit, especially for artists seeking consistent style transfer. While LoRAs have gained popularity for their efficiency, hypernetworks excel at dramatic aesthetic transformation.

Start with community hypernetworks from Civitai to understand how different styles affect your output. Once comfortable, experiment with training custom hypernetworks using your own artistic references or favorite artwork styles.

The key is patience with the weight slider. Small adjustments between 0.3 and 0.6 make dramatic differences in final output quality. Take time to test each hypernetwork across multiple prompts before committing to specific settings for your project.
